Notes of MDA Sem 2, Programming With R: Text Analytics - Study Material


Analytics Edge: Unit 5 - An Introduction to Text Analytics
Sulman Khan, October 26, 2018

TURNING TWEETS INTO KNOWLEDGE

Twitter
• Twitter is a social networking and communication website founded in 2006
• Users share and send messages that can be no longer than 140 characters long
• One of the Top 10 most-visited sites on the internet

Impact of Twitter
• Used by protesters across the world
• Natural disaster notification, tracking of diseases
• Companies will invest more than $120 billion by 2015 on analytics, hardware, software and services
• Celebrities, politicians, and companies connect with fans and customers

Understanding People
• Many companies maintain online presences
• Managing public perception in the age of instant communication is essential
• How can we use analytics to address this?

Using Text as Data
• Until now, our data has typically been
  o Structured
  o Numerical
  o Categorical
• Tweets are
  o Loosely structured
  o Textual
  o Poor spelling, non-traditional grammar
  o Multilingual

Text Analytics
• We have discussed why people care about textual data, but how do we handle it?
• Humans can’t keep up with Internet-scale volumes of data

How Can Computers Help?


• Computers need to understand text
• This field is called Natural Language Processing

Why is it Hard?
• Computers need to understand text
• Ambiguity:
  o “I put my bag in the car. It is large and blue”
  o “It” = bag? “It” = car?

Creating the Dataset
• Twitter data is publicly available
  o Scrape website, or
  o Use special interface for programmers (API)
  o Sender of tweet may be useful, but we will ignore it
• Need to construct the outcome variable for tweets
  o Thousands of tweets
  o Two people may disagree over the correct classification

A Bag of Words
• Fully understanding text is difficult
• Simpler approach:
  o Count the number of times each word appears
“This course is great. I would recommend this course to my friends.”

Word       Number of times
THIS       2
COURSE     2
GREAT      1
…          …
WOULD      1
FRIENDS    1

A Simple but Effective Approach
• One feature for each word: a simple approach, but effective
• Used as a baseline in text analytics projects and natural language processing
• Not the whole story though: preprocessing can dramatically improve performance!

Text Analytics in General


• Selecting the specific features that are relevant in the application
• Applying problem-specific knowledge can get better results
  o Meaning of symbols
  o Features like number of words

The Analytics Edge
• Analytical sentiment analysis can replace more labor-intensive methods like polling
• Text analytics can deal with the massive amounts of unstructured data being generated on the internet
• Computers are becoming more and more capable of interacting with humans and performing human tasks

Preprocessing in R

Read in the data
# Load in the data
tweets = read.csv("tweets.csv", stringsAsFactors=FALSE)
# Inspect the structure of the data frame
str(tweets)
## 'data.frame':  1181 obs. of  2 variables:
##  $ Tweet: chr  "I have to say, Apple has by far the best customer care service I have ever received! @Apple @AppStore" "iOS 7 is so fricking smooth & beautiful!! #ThanxApple @Apple" "LOVE U @APPLE" "Thank you @apple, loving my new iPhone 5S!!!!! #apple #iphone5S pic.twitter.com/XmHJCU4pcb" ...
##  $ Avg  : num  2 2 1.8 1.8 1.8 1.8 1.8 1.6 1.6 1.6 ...

Create dependent variable
# Create the dependent variable: TRUE when the average sentiment score is -1 or lower
tweets$Negative = as.factor(tweets$Avg <= -1)
# Tabulate the negative tweets (kable() comes from the knitr package)
library(knitr)
z = table(tweets$Negative)
kable(z)

Var1    Freq
FALSE   …
TRUE    …

Install new packages
# install.packages(c("tm", "SnowballC"))   # run once if not yet installed
library(tm)
library(SnowballC)

Create corpus
# Create corpus
corpus = VCorpus(VectorSource(tweets$Tweet))
# Look at corpus
corpus
## <<VCorpus>>
## Metadata:  corpus specific: 0, document level (indexed): 0
## Content:  documents: 1181
corpus[[1]]$content
## [1] "I have to say, Apple has by far the best customer care service I have ever received! @Apple @AppStore"

Convert to lower-case
# Convert to lower-case
corpus = tm_map(corpus, content_transformer(tolower))
corpus[[1]]$content
## [1] "i have to say, apple has by far the best customer care service i have ever received! @apple @appstore"

Remove punctuation
# Remove punctuation
corpus = tm_map(corpus, removePunctuation)
corpus[[1]]$content
## [1] "i have to say apple has by far the best customer care service i have ever received apple appstore"

Look at stop words
# Examine stop words
stopwords("english")[1:10]
##  [1] "i"         "me"        "my"        "myself"    "we"        "our"       "ours"      "ourselves" "you"       "your"

Remove stopwords and apple
# Remove stop words and the word "apple"


corpus = tm_map(corpus, removeWords, c("apple", stopwords("english")))
corpus[[1]]$content
## [1] "   say    far  best customer care service   ever received  appstore"

Stem document
# Stem document
corpus = tm_map(corpus, stemDocument)
corpus[[1]]$content
## [1] "say far best custom care servic ever receiv appstor"

Create matrix
# Create the document-term matrix
frequencies = DocumentTermMatrix(corpus)
frequencies
## <<DocumentTermMatrix (documents: 1181, terms: 3289)>>
## Non-/sparse entries: 8980/3875329
## Sparsity           : 100%
## Maximal term length: 115
## Weighting          : term frequency (tf)

Look at matrix
# Look at the matrix
inspect(frequencies[1000:1005, 505:515])
## <<DocumentTermMatrix (documents: 6, terms: 11)>>
## Non-/sparse entries: 1/65
## Sparsity           : 98%
## Maximal term length: 9
## Weighting          : term frequency (tf)
## Sample             :
##       Terms
## Docs   cheapen cheaper check cheep cheer cheerio cherylcol chief chiiiiqu child
##   1000       0       0     0     0     0       0         0     0        0     0
##   1001       0       0     0     0     0       0         0     0        0     0
##   1002       0       0     0     0     0       0         0     0        0     0
##   1003       0       0     0     0     0       0         0     0        0     0
##   1004       0       0     0     0     0       0         0     0        0     0
##   1005       0       0     0     0     1       0         0     0        0     0

Find frequent terms
# Terms that appear at least 20 times across all tweets
findFreqTerms(frequencies, lowfreq=20)
##  [1] "android"     "anyon"       "app"         "appl"        "back"        "batteri"     "better"      "buy"
##  [9] "can"         "cant"        "come"        "dont"        "fingerprint" "freak"       "get"         "googl"
## [17] "ios7"        "ipad"        "iphon"       "iphone5"     "iphone5c"    "ipod"        "ipodplayerpromo" "itun"
## [25] "just"        "like"        "look"        "lol"         "love"        "make"        "market"      "microsoft"
## [33] "need"        "new"         "now"         "one"         "phone"       "pleas"       "promo"       "promoipodplayerpromo"
## [41] "realli"      "releas"      "say"         "samsung"     "store"       "thank"       "think"       "time"
## [49] "twitter"     "updat"       "use"         "via"         "want"        "well"        "will"        "work"

Remove sparse terms
# Remove sparse terms
sparse = removeSparseTerms(frequencies, 0.995)
sparse
## <<DocumentTermMatrix (documents: 1181, terms: 309)>>
## Non-/sparse entries: 4669/360260
## Sparsity           : 99%
## Maximal term length: 20
## Weighting          : term frequency (tf)

Convert to a data frame
# Convert to a data frame
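The preprocessing and bag-of-words steps above can be sketched without tm, in base R, so that each transformation is visible. This is a minimal illustration under stated assumptions, not the tm implementation: the three documents and the short stop-word list are hypothetical stand-ins for the tweets and stopwords("english"), and stemming is skipped. The sparsity filter follows what removeSparseTerms appears to do (keep a term only if the share of documents not containing it is below the threshold); read that way, a threshold of 0.995 on 1181 tweets keeps terms that appear in more than 1181 × 0.005 ≈ 5.9 tweets, i.e. in at least 6.

```r
# Hedged base-R sketch of the tm steps above; not the tm implementation.
# The documents and stop-word list are hypothetical; stemming is skipped.
docs <- c("I love my new iPhone!",
          "Apple support was slow, I hate waiting.",
          "I love the new iPad and I love iOS 7")
stop_words <- c("i", "my", "the", "and", "was", "apple")

# Lower-case, strip punctuation, split on whitespace, drop stop words
tokenize <- function(x) {
  x <- gsub("[[:punct:]]", "", tolower(x))
  words <- strsplit(x, "\\s+")[[1]]
  words[words != "" & !(words %in% stop_words)]
}

tokens <- lapply(docs, tokenize)
vocab <- sort(unique(unlist(tokens)))

# Document-term matrix (bag of words): one row per document, one column per term
dtm <- t(vapply(tokens,
                function(tk) as.integer(table(factor(tk, levels = vocab))),
                integer(length(vocab))))
colnames(dtm) <- vocab

# Keep a term only if its share of zero entries is below `sparse`
keep_dense <- function(m, sparse) {
  m[, colMeans(m == 0) < sparse, drop = FALSE]
}

dense <- keep_dense(dtm, 0.5)
df <- as.data.frame(dense)
print(df)
```

The threshold in the sketch is 0.5 only because there are three documents; with it, just "love" and "new" (each present in two of the three documents) survive.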


Evaluate the performance of the model
# Make predictions using the CART model
predictCART = predict(tweetCART, newdata=testSparse, type="class")
# Tabulate the negative tweets in the testing set vs the predictions
# (rows = actual values, columns = predictions)
a = table(testSparse$Negative, predictCART)
kable(a)

         FALSE   TRUE
FALSE      294      6
TRUE        37     18

Compute accuracy
# Compute accuracy: correct predictions (the diagonal) over all predictions
sum(diag(a))/sum(a)
## [1] 0.8788732
(294+18)/(294+6+37+18)
## [1] 0.8788732
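The accuracy above, the baseline in the next step, and two related rates can all be read off a 2×2 confusion matrix. A minimal base-R sketch, re-entering the CART tweet table from these notes by hand (rows = actual, columns = predicted); sensitivity and specificity are extra metrics that the original notes do not compute.

```r
# CART confusion matrix for the tweets, re-entered by hand from the notes.
# Rows = actual Negative, columns = predicted Negative.
cm <- matrix(c(294, 37, 6, 18), nrow = 2,
             dimnames = list(actual = c("FALSE", "TRUE"),
                             predicted = c("FALSE", "TRUE")))

accuracy    <- sum(diag(cm)) / sum(cm)                    # (294 + 18) / 355
sensitivity <- cm["TRUE", "TRUE"] / sum(cm["TRUE", ])     # 18 / (37 + 18)
specificity <- cm["FALSE", "FALSE"] / sum(cm["FALSE", ])  # 294 / (294 + 6)
baseline    <- max(rowSums(cm)) / sum(cm)                 # always predict FALSE: 300 / 355

# accuracy 0.8789, sensitivity 0.3273, specificity 0.98, baseline 0.8451
round(c(accuracy = accuracy, sensitivity = sensitivity,
        specificity = specificity, baseline = baseline), 4)
```

The low sensitivity shows why accuracy alone can flatter the model: most of the gain over the baseline comes from the majority class.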


Baseline accuracy
# Tabulate baseline accuracy
a = table(testSparse$Negative)
kable(a)

Var1    Freq
FALSE    300
TRUE      55

# Baseline: always predict the most frequent class (FALSE, i.e. not negative)
a[1]/sum(a)
##     FALSE
## 0.8450704
300/(300+55)
## [1] 0.8450704

Random forest model
# Implement random forest model
library(randomForest)
set.seed(123)
tweetRF = randomForest(Negative ~ ., data=trainSparse)

Make predictions
# Make predictions using the RF model
predictRF = predict(tweetRF, newdata=testSparse)
# Tabulate the negative tweets in the testing set vs the predictions
table(testSparse$Negative, predictRF)
##        predictRF
##         FALSE TRUE
##   FALSE   293    7
##   TRUE     34   21
a = table(testSparse$Negative, predictRF)
kable(a)

         FALSE   TRUE
FALSE      293      7
TRUE        34     21

Random Forest Accuracy
# Compute the accuracy
sum(diag(a))/sum(a)
## [1] 0.884507
(293+21)/(293+7+34+21)
## [1] 0.884507

Man vs Machine - IBM Watson

A Grand Challenge
• In 2004, IBM Vice President Charles Lickel and co-workers were having dinner at a restaurant
• All of a sudden, the restaurant fell silent
• Everyone was watching the game show Jeopardy! on the television in the bar
• A contestant, Ken Jennings, was setting the record for the longest winning streak of all time (75 days)
• Why was everyone so interested?
  o Jeopardy! is a quiz show that asks complex and clever questions (puns, obscure facts, uncommon words)
  o Originally aired in 1964
  o A huge variety of topics
  o Generally viewed as an impressive feat to do well

The Challenge Begins
• In 2005, a team at IBM Research started creating a computer that could compete at Jeopardy!
• Six years later, a two-game exhibition match aired on television
  o The winner would receive $1,000,000

The Contestants
• Ken Jennings
  o Longest winning streak of 75 days
• Brad Rutter
  o Biggest money winner of over $3.5 million
• Watson
  o A supercomputer with 3,000 processors and a database of 200 million pages of information

The Game of Jeopardy!
• Three rounds per game
  o Jeopardy
  o Double Jeopardy (dollar values doubled)
  o Final Jeopardy (wager on response to one question)


• Each round has five questions in six categories
  o Wide variety of topics (over 2,500 different categories)
• Each question has a dollar value: the first to buzz in and answer correctly wins the money
  o If they answer incorrectly they lose the money

Example Round

Jeopardy! Questions
• Cryptic definitions of categories and clues
• Answer in the form of a question
  o Q: Mozart’s last and perhaps most powerful symphony shares its name with this planet.
  o A: What is Jupiter?
  o Q: Smaller than only Greenland, it’s the world’s second largest island.
  o A: What is New Guinea?

Why is Jeopardy Hard?
• Wide variety of categories, purposely made cryptic
• Computers can easily answer precise questions
• Understanding natural language is hard
  o Where was Albert Einstein born?
  o Suppose you have the following information:
    ▪ “One day, from his city views of Ulm, Otto chose a water color to send to Albert Einstein as a remembrance of his birthplace.”

Using Analytics
• Watson received each question in text form
  o Normally, players see and hear the questions
• IBM used analytics to make Watson a competitive player
• Used over 100 different techniques for analyzing natural language, finding hypotheses, and ranking hypotheses

Watson’s Database and Tools
• A massive number of data sources
  o Encyclopedias, texts, manuals, magazines, Wikipedia, etc.
• Lexicon
  o Describes the relationship between different words


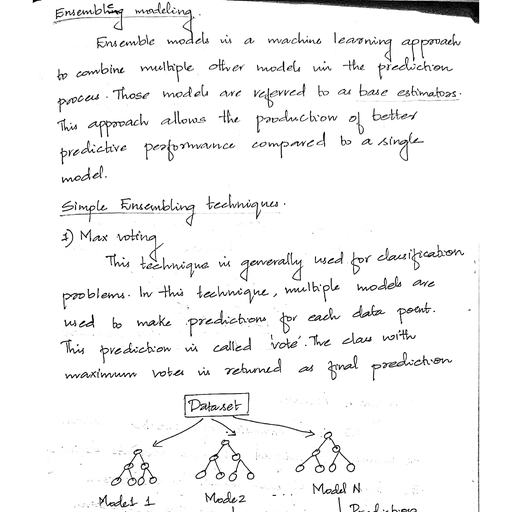

  o Ex: “Water” is a “clear liquid” but not all “clear liquids” are “water”
• Part of speech tagger and parser
  o Identifies functions of words in text
  o Ex: “Race” can be a verb or a noun
  o He won the race by 10 seconds.
  o Please indicate your race.

How Watson Works
• Step 1: Question Analysis
  o Figure out what the question is looking for
• Step 2: Hypothesis Generation
  o Search information sources for possible answers
• Step 3: Scoring Hypotheses
  o Compute confidence levels for each answer
• Step 4: Final Ranking
  o Look for a highly supported answer

Progress from 2006 - 2010
IBM Watson’s progress from 2006 to 2010

The Results
Games     Ken Jennings   Brad Rutter   Watson
Game 1    $4,800         $10,400       $35,734
Game 2    $19,200        $11,200       $41,413
Total     $24,000        $21,600       $77,147

The Analytics Edge
• Combine many algorithms to increase accuracy and confidence
  o Any one algorithm wouldn’t have worked
• Approach the problem in a different way than how a human does


  o Hypothesis generation
• Deal with massive amounts of data, often in unstructured form
  o 90% of data is unstructured

Analytics Edge: Unit 5 - Predictive Coding
Sulman Khan, October 26, 2018

Bringing Text Analytics to the Courtroom

Enron Corporation
• U.S. energy company from Houston, Texas
• Produced and distributed power
• Market capitalization exceeded $60 billion
• Forbes: Most Innovative U.S. Company, 1996-2001
• Widespread accounting fraud exposed in 2001
  o Led to bankruptcy, the largest ever at that time
  o Led major accounting firm Arthur Andersen to dissolve
• Symbol of corporate corruption

California Energy Crisis
• California is the most populous state in the United States
• In 2000-2001, plagued by blackouts despite having plenty of power plants
• Enron played a key role in causing the crisis
  o Reduced supply to the state to cause price spikes
  o Made trades to profit from the market instability
• Federal Energy Regulatory Commission (FERC) investigated Enron’s involvement
  o Eventually led to $1.52 billion settlement
  o Topic of today’s recitation

The eDiscovery Problem
• Enron had millions of electronic files
• Leads to the eDiscovery problem: how do we find files relevant to a lawsuit?
  o In legal parlance, searching for responsive documents
• Traditionally, keyword search followed by manual review
  o Tedious process
  o Expensive, time consuming
• More recently: predictive coding (technology-assisted review)
  o Manually label some of the documents to train models
  o Apply models to a much larger set of documents

The Enron Corpus
• FERC publicly released emails from Enron


• >600,000 emails, 158 users (mostly senior management)
• Largest publicly available set of emails
• Dataset we will use for predictive coding
• We will use labeled emails from the 2010 Text Retrieval Conference Legal Track
  o email: text of the message
  o responsive: does the email relate to energy schedules or bids?

Predictive Coding Today
• In the legal system, it is difficult to change existing practices
  o System based on past precedent
  o eDiscovery historically performed by keyword search coupled with manual review
• 2012 U.S. District Court ruling: predictive coding is a legitimate eDiscovery tool
• Use likely to expand in coming years

Enron Dataset in R

Load the dataset
# Load the dataset
emails = read.csv("energy_bids.csv", stringsAsFactors=FALSE)
# Inspect the structure of the data frame
str(emails)
## 'data.frame':  855 obs. of  2 variables:
##  $ email     : chr  "North America's integrated electricity market requires cooperation on environmental policies Commission for Env"| __truncated__ "FYI -----Original Message----- From: \t\"Ginny Feliciano\" <gfeliciano@earthlink.net>@ENRON [mailto:IMCEANOTES-"| __truncated__ "14:13:53 Synchronizing Mailbox 'Kean, Steven J.' 14:13:53 Synchronizing Hierarchy 14:13:53 Synchronizing Favori"| __truncated__ "^ ----- Forwarded by Steven J Kean/NA/Enron on 03/02/2001 12:27 PM ---- [email protected] Sent by: "| __truncated__ ...
##  $ responsive: int  0 1 0 1 0 0 1 0 0 0 ...

Look at emails
# Examine emails
emails$email[1]
## [1] "North America's integrated electricity market requires cooperation on environmental policies Commission for Environmental Cooperation releases working paper on North America's electricity market Montreal, 27 November 2001 -- The North American Commission for Environmental Cooperation (CEC) is releasing a working paper highlighting the trend towards increasing trade, competition and cross-border investment in electricity between Canada, Mexico and the United States. It is hoped that the working paper, Environmental Challenges and Opportunities in the Evolving North American Electricity Market, will stimulate public discussion around a CEC symposium of the same title about the need to coordinate environmental policies trinationally as a North America-wide electricity market develops. The CEC symposium will take place in San Diego on 29-30 November, and will bring together leading experts from industry, academia, NGOs and the governments of Canada, Mexico and the United States to consider the impact of the evolving continental electricity market on human health and the environment. \"Our goal [with the working paper and the symposium] is to highlight key environmental issues that must be addressed as the electricity markets in North America become more and more integrated,\" said Janine Ferretti, executive director of the CEC. \"We want to stimulate discussion around the important policy questions being raised so that countries can cooperate in their approach to energy and the environment.\" The CEC, an international organization created under an environmental side agreement to NAFTA known as the North American Agreement on Environmental Cooperation, was established to address regional environmental concerns, help prevent potential trade and environmental conflicts, and promote the effective enforcement of environmental law. The CEC Secretariat believes that greater North American cooperation on environmental policies regarding the continental electricity market is necessary to: * protect air quality and mitigate climate change, * minimize the possibility of environment-based trade disputes, * ensure a dependable supply of reasonably priced electricity across North America * avoid creation of pollution havens, and * ensure local and national environmental measures remain effective. The Changing Market The working paper profiles the rapid changing North American electricity market. For example, in 2001, the US is projected to export 13.1 thousand gigawatt-hours (GWh) of electricity to Canada and Mexico. By 2007, this number is projected to grow to 16.9 thousand GWh of electricity. \"Over the past few decades, the North American electricity market has developed into a complex array of cross-border transactions and relationships,\" said Phil Sharp, former US congressman and chairman of the CEC's Electricity Advisory Board. \"We need to achieve this new level of cooperation in our environmental approaches as well.\" The Environmental Profile of the Electricity Sector The electricity sector is the single largest source of nationally reported toxins in the United States and Canada and a large source in Mexico. In the US, the electricity sector emits approximately 25 percent of all NOx emissions, roughly 35 percent of all CO2 emissions, 25 percent of all mercury emissions and almost 70 percent of SO2 emissions. These emissions have a large impact on airsheds, watersheds and migratory species corridors that are often shared between the three North American countries. \"We want to discuss the possible outcomes from greater efforts to coordinate federal, state or provincial environmental laws and policies that relate to the electricity sector,\" said Ferretti. \"How can we develop more compatible environmental approaches to help make domestic environmental policies more effective?\" The Effects of an Integrated Electricity Market One key issue raised in the paper is the effect of market integration on the competitiveness of particular fuels such as coal, natural gas or renewables. Fuel choice largely determines environmental impacts from a specific facility, along with pollution control technologies, performance standards and regulations. The paper highlights other impacts of a highly competitive market as well. For example, concerns about so called \"pollution havens\" arise when significant differences in environmental laws or enforcement practices induce power companies to locate their operations in jurisdictions with lower standards. \"The CEC Secretariat is exploring what additional environmental policies will work in this restructured market and how these policies can be adapted to ensure that they enhance competitiveness and benefit the entire region,\" said Sharp. Because trade rules and policy measures directly influence the variables that drive a successfully integrated North American electricity market, the working paper also addresses fuel choice, technology, pollution control strategies and subsidies. The CEC will use the information gathered during the discussion period to develop a final report that will be submitted to the Council in early 2002. For more information or to view the live video webcast of the symposium, please go to: http://www.cec.org/electricity. You may download the working paper and other supporting documents from: http://www.cec.org/programs_projects/other_initiatives/electricity/docs.cfm?varlan=english. Commission for Environmental Cooperation 393, rue St-Jacques Ouest, Bureau 200 Montréal (Québec) Canada H2Y 1N9 Tel: (514) 350-4300; Fax: (514) 350-4314 E-mail: info@ccemtl.org ***********"
emails$responsive[1]
## [1] 0
emails$email[2]
## [1] "FYI -----Original Message----- From: \t\"Ginny Feliciano\" <gfeliciano@earthlink.net>@ENRON [mailto:IMCEANOTES-+22Ginny+20Feliciano+22+20+3Cgfeliciano+4[email protected]] Sent:\tThursday, June 28, 2001 3:40 PM To:\tSilvia Woodard; Paul Runci; Katrin Thomas; John A. Riggs; Kurt E. Yeager; Gre



##  $ 1999     : num  0 0 0 0 0 0 0 0 0 0 ...
##  $ 2000     : num  0 0 1 0 1 0 6 0 1 0 ...
##  $ 2001     : num  2 1 0 0 0 0 7 0 0 0 ...
##  $ 713      : num  0 0 0 0 0 0 0 0 0 0 ...
##  $ 77002    : num  0 0 0 0 0 0 0 0 0 0 ...
##  $ abl      : num  0 0 0 0 0 0 2 0 0 0 ...
##  $ accept   : num  0 0 0 0 0 0 1 0 0 0 ...
##  $ access   : num  0 0 0 0 0 0 0 0 0 0 ...
##  $ accord   : num  0 0 0 0 0 0 1 0 0 0 ...
##  $ account  : num  0 0 0 0 0 0 3 0 0 0 ...
##  $ act      : num  0 0 0 0 0 0 1 0 0 0 ...
##  $ action   : num  0 0 0 0 1 0 0 0 0 0 ...
##  $ activ    : num  0 0 1 0 1 0 1 0 0 0 ...
##  $ actual   : num  0 0 0 0 0 0 0 0 0 0 ...
##  $ add      : num  0 0 0 0 0 0 1 0 0 0 ...
##  $ addit    : num  1 0 0 0 0 0 1 0 0 0 ...
##  $ address  : num  3 0 0 0 2 0 0 0 0 1 ...
##  $ administr: num  0 0 0 0 0 0 1 0 0 0 ...
##  $ advanc   : num  0 0 0 0 0 0 0 0 0 0 ...
##  $ advis    : num  0 0 0 0 0 0 0 0 0 0 ...
##  $ affect   : num  0 0 0 0 2 0 0 0 0 0 ...
##  $ afternoon: num  0 0 0 0 0 0 0 0 0 0 ...
##  $ agenc    : num  0 0 0 0 1 0 0 0 0 0 ...
##  $ ago      : num  0 0 0 0 0 0 1 0 0 0 ...
##  $ agre     : num  0 0 0 0 0 0 0 0 0 0 ...
##  $ agreement: num  2 0 0 0 2 0 1 0 0 1 ...
##  $ alan     : num  0 0 0 0 0 1 0 0 0 0 ...
##  $ allow    : num  0 0 0 0 0 0 2 0 0 0 ...
##  $ along    : num  1 0 0 0 1 0 1 0 0 0 ...
##  $ alreadi  : num  0 0 0 0 0 0 0 0 0 0 ...
##  $ also     : num  1 0 0 0 0 0 8 0 0 0 ...
##  $ altern   : num  0 0 0 0 0 0 0 0 1 0 ...
##  $ although : num  0 0 0 0 0 0 6 0 0 0 ...
##  $ amend    : num  0 0 0 0 0 0 0 0 0 0 ...
##  $ america  : num  4 0 0 0 0 0 0 0 1 0 ...
##  $ among    : num  0 0 0 0 0 0 3 0 0 0 ...
##  $ amount   : num  0 0 0 0 0 0 1 0 0 0 ...
##  $ analysi  : num  0 0 0 2 0 0 0 0 0 0 ...
##  $ analyst  : num  0 0 0 0 0 0 6 0 0 0 ...
##  $ andor    : num  0 0 0 0 0 0 0 0 0 0 ...
##  $ andrew   : num  0 0 0 0 0 0 0 0 0 0 ...
##  $ announc  : num  0 0 0 0 0 0 2 0 0 0 ...
##  $ anoth    : num  0 0 0 0 0 0 6 0 0 0 ...
##  $ answer   : num  0 0 0 0 0 0 2 0 0 0 ...
##  $ anyon    : num  0 0 0 0 0 0 0 0 0 0 ...
##  $ anyth    : num  0 0 0 0 0 0 0 0 0 0 ...
##  $ appear   : num  0 0 0 0 0 0 3 0 0 0 ...
##  $ appli    : num  0 0 0 0 0 0 0 0 0 0 ...
##  $ applic   : num  0 0 0 0 0 0 0 0 0 0 ...
##  $ appreci  : num  0 0 0 0 1 0 0 0 0 0 ...
##  $ approach : num  3 0 0 0 0 0 1 0 0 0 ...
##  $ appropri : num  0 0 0 0 0 0 0 1 0 0 ...
##  $ approv   : num  0 0 0 0 0 0 1 0 0 0 ...
##  $ approxim : num  1 0 0 0 0 0 1 0 0 0 ...
##  $ april    : num  0 0 0 0 0 0 3 0 0 0 ...
##  $ area     : num  0 0 0 0 1 0 3 0 0 0 ...
##  $ around   : num  2 0 0 0 0 0 1 0 0 0 ...
##  $ arrang   : num  0 0 0 0 0 0 0 0 0 0 ...
##  $ articl   : num  0 0 0 0 0 0 1 0 0 0 ...
##  $ ask      : num  0 0 0 0 0 1 0 0 0 0 ...
##  $ asset    : num  0 0 0 0 0 0 2 0 0 0 ...
##  $ assist   : num  0 0 0 0 0 0 0 0 0 0 ...
##  $ associ   : num  0 0 1 0 1 0 0 0 0 0 ...
##  $ assum    : num  0 0 0 0 0 1 0 0 0 0 ...
##  $ attach   : num  0 1 0 1 1 0 1 0 3 1 ...
##  $ attend   : num  0 0 0 0 0 0 0 0 1 0 ...
##  $ attent   : num  0 0 0 0 0 0 1 0 0 0 ...
##  $ attorney : num  0 0 0 0 0 0 0 0 0 0 ...
##  $ august   : num  0 0 0 0 0 0 0 0 0 0 ...
##  $ author   : num  0 0 1 0 0 0 0 0 0 0 ...
##  $ avail    : num  0 0 0 0 0 0 0 0 0 0 ...
##  $ averag   : num  0 0 0 0 0 0 5 0 0 0 ...
##  $ avoid    : num  1 0 0 0 1 0 2 0 0 0 ...
##  $ awar     : num  0 0 0 0 0 0 0 0 0 0 ...
##  $ back     : num  0 0 0 0 1 1 1 0 0 0 ...
##  $ balanc   : num  0 0 0 0 0 0 0 0 0 0 ...
##  $ bank     : num  0 0 0 0 0 0 2 0 0 0 ...
##  $ base     : num  0 0 0 0 1 0 9 0 0 0 ...
##  $ basi     : num  0 0 0 0 0 0 1 0 0 0 ...
##  $ becom    : num  1 0 0 0 0 0 4 0 0 0 ...
##  $ begin    : num  0 0 0 0 0 0 0 0 0 0 ...
##  $ believ   : num  1 0 0 0 0 0 0 0 0 0 ...
##  $ benefit  : num  1 0 0 0 0 0 5 0 0 0 ...
##  $ best     : num  0 0 0 0 0 0 0 0 0 1 ...
##  $ better   : num  0 0 0 0 0 0 2 0 0 0 ...
##  $ bid      : num  0 0 0 0 0 0 1 0 0 0 ...
##  $ big      : num  0 0 0 0 0 1 6 0 0 0 ...
##  $ bill     : num  0 0 0 0 0 0 0 0 0 0 ...
##  $ billion  : num  0 0 0 0 0 0 2 0 0 0 ...
##  $ bit      : num  0 0 0 0 0 1 2 0 0 0 ...
##  $ board    : num  1 0 0 0 0 0 0 0 0 0 ...
##  $ bob      : num  0 0 0 0 0 0 0 0 0 0 ...
##  $ book     : num  0 0 0 0 0 0 0 0 0 0 ...
##  $ brian    : num  0 1 0 0 0 0 0 0 0 0 ...
##  $ brief    : num  0 0 0 0 0 0 0 0 0 0 ...
##  $ bring    : num  1 0 0 0 0 0 2 0 0 0 ...
##  $ build    : num  0 0 0 0 0 0 7 0 1 0 ...
##   [list output truncated]

Split the data
# Split the data into training and testing sets
library(caTools)
set.seed(144)
spl = sample.split(labeledTerms$responsive, 0.7)
train = subset(labeledTerms, spl == TRUE)
test = subset(labeledTerms, spl == FALSE)

Build a CART model
# Build a CART model
library(rpart)
library(rpart.plot)
emailCART = rpart(responsive~., data=train, method="class")
# Plot the CART model
prp(emailCART)
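The split above relies on caTools::sample.split, which, given the outcome vector, aims to keep the proportion of responsive emails similar in the training and testing sets. The helper below is a hypothetical base-R approximation of that idea, not caTools' actual code: it samples the same fraction of rows from each class separately and, like sample.split, returns a logical vector. The labels are made up for illustration.

```r
# Hypothetical helper (not caTools' code): stratified split that samples
# the same fraction of rows from each outcome class.
stratified_split <- function(y, ratio) {
  in_train <- logical(length(y))
  for (cls in unique(y)) {
    idx <- which(y == cls)                   # rows belonging to this class
    n_train <- round(length(idx) * ratio)    # how many of them go to training
    in_train[idx[sample.int(length(idx), n_train)]] <- TRUE
  }
  in_train
}

# Made-up labels: 100 non-responsive (0) and 40 responsive (1) emails
set.seed(144)
y <- c(rep(0, 100), rep(1, 40))
spl <- stratified_split(y, 0.7)

# 98 training rows; the responsive share is 2/7 in both sets
c(n_train = sum(spl), share_train = mean(y[spl]), share_test = mean(y[!spl]))
```

Stratifying matters here because responsive emails are the minority class; a plain random split could, by chance, leave the test set with noticeably more or fewer of them.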

Page 23 :

Make predictions on the test set
# Predict using the CART model on the test set
pred = predict(emailCART, newdata=test)
pred[1:10,]
##            0          1
## 2  0.2156863 0.78431373
## 5  0.9557522 0.04424779
## 11 0.9557522 0.04424779
## 13 0.8125000 0.18750000
## 28 0.4000000 0.60000000
## 37 0.9557522 0.04424779
## 47 0.9557522 0.04424779
## 58 0.9557522 0.04424779
## 61 0.1250000 0.87500000
## 62 0.1250000 0.87500000
# Column 2 holds the predicted probability that a document is responsive (class 1)
pred.prob = pred[,2]

Compute accuracy
# Tabulate the responsive in the test set vs predictions

Page 24 :

library(knitr)  # provides kable()
a = table(test$responsive, pred.prob >= 0.5)
kable(a)

      FALSE  TRUE
0       195    20
1        17    25

# Compute accuracy
sum(diag(a))/sum(a)
## [1] 0.8560311
(195+25)/(195+25+17+20)
## [1] 0.8560311

Baseline model accuracy
The baseline model predicts that every document is non-responsive, the more common outcome.
# Tabulate the responsive in the test set
a = table(test$responsive)
kable(a)

Var1  Freq
0      215
1       42

# Compute accuracy
a[1]/sum(a)
##         0
## 0.8365759
215/(215+42)
## [1] 0.8365759

So the CART model gives only a small improvement in accuracy over the baseline (0.856 vs 0.837).
However, as in most document retrieval applications, there are uneven costs for different types of errors here. Typically, a human will still have to manually review all of the predicted responsive documents to make sure they are actually responsive. Therefore, if we have a false positive, in which a non-responsive document is labeled as responsive, the mistake translates to a bit of additional work in the manual review process but no further harm, since the manual review process will remove this erroneous result. On the other hand, if we have a false negative, in which a responsive document is labeled as non-responsive by our model, we will miss the document entirely in our predictive coding process. Therefore, we're going to assign a higher cost to false negatives than to false positives, which makes this a good time to look at other cutoffs on our ROC curve.

ROC curve
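Each point on an ROC curve is the (false positive rate, true positive rate) pair obtained from a confusion matrix at one cutoff. As a small base-R sketch, here are the 0.5-cutoff quantities computed from the test-set counts in the confusion matrix above, together with the baseline accuracy. The variable names are illustrative, not from the original code.

```r
# Test-set confusion matrix at cutoff 0.5, counts copied from the table above
# (rows = actual responsive value, columns = predicted responsive?)
cm <- matrix(c(195, 17, 20, 25), nrow = 2,
             dimnames = list(actual = c("0", "1"),
                             predicted = c("FALSE", "TRUE")))

TN <- cm["0", "FALSE"]; FP <- cm["0", "TRUE"]    # actual non-responsive
FN <- cm["1", "FALSE"]; TP <- cm["1", "TRUE"]    # actual responsive

accuracy <- (TN + TP) / sum(cm)          # share of correct predictions
baseline <- max(rowSums(cm)) / sum(cm)   # always predict the majority class, 0
tpr <- TP / (TP + FN)                    # sensitivity, y-axis of the ROC curve
fpr <- FP / (FP + TN)                    # 1 - specificity, x-axis of the ROC curve

round(c(accuracy = accuracy, baseline = baseline, tpr = tpr, fpr = fpr), 4)
```

At the 0.5 cutoff the model finds about 60% of the responsive documents (TPR = 25/42) while flagging about 9% of the non-responsive ones (FPR = 20/215). Lowering the cutoff moves along the curve toward a higher TPR at the price of a higher FPR.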

Page 25 :

Now, of course, the best cutoff to select is entirely dependent on the costs assigned by the decision maker to false positives and false negatives. However, again, we do favor cutoffs that give us a high sensitivity, since we want to identify a large number of the responsive documents. A reasonable choice is the point on the curve with a true positive rate of around 70%, meaning that we're getting about 70% of all the responsive documents, and a false positive rate of about 20%, meaning that we're making mistakes and accidentally identifying as responsive 20% of the non-responsive documents. Since the vast majority of documents are non-responsive, operating at this cutoff would result, perhaps, in a large decrease in the amount of manual effort needed in the eDiscovery process. And we can see from the blue color of the plot at this particular location that we're looking at a threshold of around 0.15 or so, significantly lower than 50%, which is what we would expect since we prefer false positives to false negatives.
library(ROCR)
# Build a ROCR prediction object from the predicted probabilities and true labels
predROCR = prediction(pred.prob, test$responsive)
# Compute true positive rate vs false positive rate at every cutoff
perfROCR = performance(predROCR, "tpr", "fpr")
# Plot the ROC curve, colored by cutoff value
plot(perfROCR, colorize=TRUE)

Compute AUC
Lastly, we can use the ROCR package to compute our AUC value. We call the performance function with our prediction object, this time asking for the AUC, and take its y.values slot. The AUC on the test set is 79.4%, which means that our model can differentiate between a randomly selected responsive document and a randomly selected non-responsive document about 80% of the time.
# Calculate AUC

Page 26 :

performance(predROCR, "auc")@y.values
## [[1]]
## [1] 0.7936323

Analytics Edge: Unit 5 - Detecting Vandalism on Wikipedia
Sulman Khan, October 27, 2018

Background Information on the Dataset
Wikipedia is a free online encyclopedia that anyone can edit and contribute to. It is available in many languages and is growing all the time. On the English language version of Wikipedia:
• There are currently 4.7 million pages.
• There have been a total of over 760 million edits (also called revisions) over its lifetime.
• There are approximately 130,000 edits per day.

One of the consequences of being editable by anyone is that some people vandalize pages. This can take the form of removing content, adding promotional or inappropriate content, or more subtle shifts that change the meaning of the article. With this many articles and edits per day, it is difficult for humans to detect all instances of vandalism and revert (undo) them. As a result, Wikipedia uses bots, computer programs that automatically revert edits that look like vandalism. In this assignment we will attempt to develop a vandalism detector that uses machine learning to distinguish between a valid edit and vandalism.
The data for this problem is based on the revision history of the page Language. Wikipedia provides a history for each page that consists of the state of the page at each revision. Rather than manually considering each revision, a script was run that checked whether edits stayed or were reverted. If a change was eventually reverted, then that revision is marked as vandalism. This may result in some misclassifications, but the script performs well enough for our needs.
As a result of this preprocessing, some common processing tasks have already been done, including lowercasing and punctuation removal.
The columns in the dataset are:
• Vandal = 1 if this edit was vandalism, 0 if not.
• Minor = 1 if the user marked this edit as a “minor edit”, 0 if not.
• Loggedin = 1 if the user made this edit while using a Wikipedia account, 0 if they did not.
• Added = The unique words added.
• Removed = The unique words removed.

Notice the repeated use of unique. The data we have available is not the traditional bag of words; rather, it is the set of words that were removed or added. For example, if a word was removed multiple times in a revision, it will only appear one time in the “Removed” column.

R Exercises
Loading the Dataset
Load the data wiki.csv with the option stringsAsFactors=FALSE, calling the data frame “wiki”. Convert the “Vandal” column to a factor using the command wiki$Vandal = as.factor(wiki$Vandal).
# Load the dataset

Page 27 :

wiki = read.csv("wiki.csv", stringsAsFactors=FALSE)
# Convert the column to a factor
wiki$Vandal = as.factor(wiki$Vandal)

How many cases of vandalism were detected in the history of this page?
# Tabulate the vandalism cases
table(wiki$Vandal)
##
##    0    1
## 2061 1815

There are 1815 observations with value 1, which denotes vandalism.

How many terms appear in dtmAdded?
We will now use the bag of words approach to build a model. We have two columns of textual data, with different meanings. For example, adding rude words has a different meaning from removing rude words. We’ll start like we did in class by building a document term matrix from the Added column. The text is already lowercase and stripped of punctuation. So to pre-process the data, just complete the following four steps:
1. Create the corpus for the Added column, and call it “corpusAdded”.
2. Remove the English-language stopwords.
3. Stem the words.
4. Build the DocumentTermMatrix, and call it dtmAdded.
If the code length(stopwords("english")) does not return 174 for you, then please run the line of code in this file, which will store the standard stop words in a variable called sw.
When removing stop words, use tm_map(corpusAdded, removeWords, sw) instead of tm_map(corpusAdded, removeWords, stopwords("english")).
sw = c("i", "me", "my", "myself", "we", "our", "ours", "ourselves", "you", "your", "yours", "yourself", "yourselves",
"he", "him", "his", "himself", "she", "her", "hers", "herself", "it", "its", "itself", "they", "them", "their", "theirs",
"themselves", "what", "which", "who", "whom", "this", "that", "these", "those", "am", "is", "are", "was", "were", "be",
"been", "being", "have", "has", "had", "having", "do", "does", "did", "doing", "would", "should", "could", "ought",
"i'm", "you're", "he's", "she's", "it's", "we're", "they're", "i've", "you've", "we've", "they've", "i'd", "you'd", "he'd",
"she'd", "we'd", "they'd", "i'll", "you'll", "he'll", "she'll", "we'll", "they'll", "isn't", "aren't", "wasn't", "weren't", "hasn't",
"haven't", "hadn't", "doesn't", "don't", "didn't", "won't", "wouldn't", "shan't", "shouldn't", "can't", "cannot", "couldn't",
"mustn't", "let's", "that's", "who's", "what's", "here's", "there's", "when's", "where's", "why's", "how's", "a", "an",
"the", "and", "but", "if", "or", "because", "as", "until", "while", "of", "at", "by", "for", "with", "about", "against",
"between", "into", "through", "during", "before", "after", "above", "below", "to", "from", "up", "down", "in", "out", "on",
"off", "over", "under", "again", "further", "then", "once", "here", "there", "when", "where", "why", "how", "all", "any",
"both", "each", "few", "more", "most", "other", "some", "such", "no", "nor", "not", "only", "own", "same", "so", "than",
"too", "very")
library(tm)
# Create the corpus for the Added column, and call it "corpusAdded"
corpusAdded = VCorpus(VectorSource(wiki$Added))
# Remove the English-language stopwords
corpusAdded = tm_map(corpusAdded, removeWords, stopwords("english"))
# Stem the words (stemDocument relies on the SnowballC package)
corpusAdded = tm_map(corpusAdded, stemDocument)
# Build the DocumentTermMatrix, and call it dtmAdded
dtmAdded = DocumentTermMatrix(corpusAdded)
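To make concrete what these steps produce, here is a base-R sketch that builds a tiny document-term matrix from toy strings that, like the Added column, are already lowercase and free of punctuation. It skips stemming and uses a hypothetical three-word stopword list (stop_demo); tm's DocumentTermMatrix does this job, and more, at scale.

```r
# Toy documents, already lowercase and without punctuation (hypothetical)
docs <- c("the cat sat on the mat",
          "the dog ate the cat food",
          "buy cheap pills now")
stop_demo <- c("the", "on", "now")   # hypothetical mini stopword list

# Split each document into words and drop the stopwords
tokens <- lapply(strsplit(docs, " ", fixed = TRUE),
                 function(w) w[!w %in% stop_demo])

# Vocabulary = all distinct remaining words, sorted alphabetically
vocab <- sort(unique(unlist(tokens)))

# One row per document, one column per term, cells = term counts
dtm_demo <- t(vapply(tokens,
                     function(w) as.numeric(table(factor(w, levels = vocab))),
                     numeric(length(vocab))))
colnames(dtm_demo) <- vocab
dtm_demo
```

Each row is one document's bag of words. rowSums(dtm_demo), here 3, 4 and 3, counts the remaining words per document, the same idea as summing the rows of dtmAdded and dtmRemoved in the word-count approach of this exercise.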

Page 29 :

ncol(wordsRemoved)
## [1] 162

162 words are in wordsRemoved.

What is the accuracy on the test set of a baseline method that always predicts “not vandalism” (the most frequent outcome)?
Combine the two data frames into a data frame called wikiWords with the following line of code:
wikiWords = cbind(wordsAdded, wordsRemoved)
The cbind function combines two sets of variables for the same observations into one data frame. Then add the Vandal column (HINT: remember how we added the dependent variable back into our data frame in the Twitter lecture). Set the random seed to 123 and then split the data set using sample.split from the “caTools” package to put 70% in the training set.
# Combine the data frames
wikiWords = cbind(wordsAdded, wordsRemoved)
wikiWords$Vandal = wiki$Vandal
# Split the dataset into a training and testing set
library(caTools)
set.seed(123)
spl = sample.split(wikiWords$Vandal, SplitRatio = 0.7)
wikiTrain = subset(wikiWords, spl==TRUE)
wikiTest = subset(wikiWords, spl==FALSE)
# Tabulate non-vandalism (0) and vandalism (1) in the test set
a = table(wikiTest$Vandal)
kable(a)

Var1  Freq
0      618
1      545

# Compute the accuracy of always predicting 0
a[1]/(sum(a))
##         0
## 0.5313844

Testing Set Accuracy = 0.5313844

Build a CART Model
Build a CART model to predict Vandal, using all of the other variables as independent variables. Use the training set to build the model and the default parameters (don’t set values for minbucket or cp).
What is the accuracy of the model on the test set, using a threshold of 0.5? (Remember that if you add the argument type="class" when making predictions, the output of predict will automatically use a threshold of 0.5.)

Page 31 :

Test Set Accuracy = 0.544282

New Approach: Identifying a key class of words
We weren’t able to improve on the baseline using the raw textual information. More specifically, the words themselves were not useful. There are other options though, and in this section we will try two techniques: identifying a key class of words, and counting words.
The key class of words we will use are website addresses. “Website addresses” (also known as URLs, Uniform Resource Locators) consist of two main parts. An example would be “http://www.google.com”. The first part is the protocol, which is usually “http” (HyperText Transfer Protocol). The second part is the address of the site, e.g. “www.google.com”. We have stripped all punctuation, so links to websites appear in the data as one word, e.g. “httpwwwgooglecom”. Given that a lot of vandalism seems to consist of adding links to promotional or irrelevant websites, we hypothesize that the presence of a web address is a sign of vandalism.
We can search for the presence of a web address in the words added by searching for “http” in the Added column.
The grepl function returns TRUE if a string is found in another string, e.g.
grepl("cat","dogs and cats",fixed=TRUE) # TRUE
grepl("cat","dogs and rats",fixed=TRUE) # FALSE
Create a copy of your data frame from the previous question:
wikiWords2 = wikiWords
Make a new column in wikiWords2 that is 1 if “http” was in Added:
wikiWords2$HTTP = ifelse(grepl("http",wiki$Added,fixed=TRUE), 1, 0)
# Create a copy of your data frame with http added
wikiWords2 = wikiWords
wikiWords2$HTTP = ifelse(grepl("http",wiki$Added,fixed=TRUE), 1, 0)
# Tabulate the revisions with and without "http"
z = table(wikiWords2$HTTP)
kable(z)

Var1  Freq
0     3659
1      217

217 revisions added a link.

CART Model #2
What is the new accuracy of the CART model on the test set, using a threshold of 0.5?
# Subset the data into training and test sets
wikiTrain2 = subset(wikiWords2, spl==TRUE)
wikiTest2 = subset(wikiWords2, spl==FALSE)
# Create the CART model
wikiCART2 = rpart(Vandal ~ ., data=wikiTrain2, method="class")
prp(wikiCART2)

Page 32 :

# Predict using the test set
testPredictCART2 = predict(wikiCART2, newdata=wikiTest2, type="class")
# Tabulate the test-set outcomes against the predictions
a = table(wikiTest2$Vandal, testPredictCART2)
kable(a)

     0    1
0  605   13
1  481   64

# Compute the accuracy
sum(diag(a))/(sum(a))
## [1] 0.5752365

Accuracy = 0.5752365

New Approach: Counting Words
Another possibility is that the number of words added and removed is predictive, perhaps more so than the actual words themselves. We already have a word count available in the form of the document-term matrices (DTMs).
Sum the rows of dtmAdded and dtmRemoved and add them as new variables in your data frame wikiWords2 (called NumWordsAdded and NumWordsRemoved) by using the following commands:

Page 34 :

# Predict using the testing set
testPredictCART3 = predict(wikiCART3, newdata=wikiTest3, type="class")
# Tabulate the testing set vs the predictions
a = table(wikiTest3$Vandal, testPredictCART3)
kable(a)

     0    1
0  514  104
1  297  248

# Compute the accuracy
sum(diag(a))/(sum(a))
## [1] 0.6552021

Accuracy = 0.6552021

Final Approach: Metadata - CART Model #4
We have two pieces of “metadata” (data about data) that we haven’t yet used. Make a copy of wikiWords2, and call it wikiWords3:
wikiWords3 = wikiWords2
Then add the two original variables Minor and Loggedin to this new data frame:
wikiWords3$Minor = wiki$Minor
wikiWords3$Loggedin = wiki$Loggedin
In problem 1.5, you computed a vector called “spl” that identified the observations to put in the training and testing sets. Use that variable (do not recompute it with sample.split) to make new training and testing sets with wikiWords3.
Build a CART model using all the training data. What is the accuracy of the model on the test set?
# Create a copy of the data and then add the two original variables
wikiWords3 = wikiWords2
wikiWords3$Minor = wiki$Minor
wikiWords3$Loggedin = wiki$Loggedin
# Subset the training and test sets
wikiTrain4 = subset(wikiWords3, spl==TRUE)
wikiTest4 = subset(wikiWords3, spl==FALSE)
# Create the CART model
wikiCART4 = rpart(Vandal ~ ., data=wikiTrain4, method="class")
prp(wikiCART4)

Page 35 :

# Make predictions using the CART model
testPredictCART4 = predict(wikiCART4, newdata=wikiTest4, type="class")
# Tabulate the testing set with the predictions
a = table(wikiTest4$Vandal, testPredictCART4)
kable(a)

     0    1
0  595   23
1  304  241

# Compute the accuracy
sum(diag(a))/(sum(a))
## [1] 0.7188306

Accuracy = 0.7188306

Analytics Edge: Unit 5 - Automating Reviews in Medicine

Page 36 :

Sulman Khan, October 27, 2018

Background Information on the Dataset
The medical literature is enormous. Pubmed, a database of medical publications maintained by the U.S. National Library of Medicine, has indexed over 23 million medical publications. Further, the rate of medical publication has increased over time, and now there are nearly 1 million new publications in the field each year, or more than one per minute.
The large size and fast-changing nature of the medical literature has increased the need for reviews, which search databases like Pubmed for papers on a particular topic and then report results from the papers found. While such reviews are often performed manually, with multiple people reviewing each search result, this is tedious and time consuming. In this problem, we will see how text analytics can be used to automate the process of information retrieval.
The dataset consists of the titles (variable title) and abstracts (variable abstract) of papers retrieved in a Pubmed search. Each search result is labeled with whether the paper is a clinical trial testing a drug therapy for cancer (variable trial). These labels were obtained by two people reviewing each search result and accessing the actual paper if necessary, as part of a literature review of clinical trials testing drug therapies for advanced and metastatic breast cancer.

R Exercises
Loading the Dataset
Load clinical_trial.csv into a data frame called trials (remembering to add the argument stringsAsFactors=FALSE), and investigate the data frame with summary() and str().

How many characters are there in the longest abstract? (Longest here is defined as the abstract with the largest number of characters.)
# Load the dataset
trials = read.csv("clinical_trial.csv", stringsAsFactors=FALSE)
# Number of characters in the longest abstract
max(nchar(trials$abstract))
## [1] 3708

3708 characters are in the longest abstract.

How many search results provided no abstract?
(HINT: A search result provided no abstract if the number of characters in the abstract field is zero.)
# Count the results with an empty abstract (TRUE = no abstract)
z = table(nchar(trials$abstract)==0)
kable(z)

Var1   Freq
FALSE  1748

Page 37 :

TRUE    112

112 search results have no abstract.

Find the observation with the minimum number of characters in the title (the variable “title”) out of all of the observations in this dataset. What is the text of the title of this article? Include capitalization and punctuation in your response, but don’t include the quotes.
# Find the observation with the minimum number of characters in the title
which.min(nchar(trials$title))
## [1] 1258
a = which.min(nchar(trials$title))
z = trials$title[a]
kable(z)

x
A decade of letrozole: FACE.

The shortest title is: A decade of letrozole: FACE.

Preparing the Corpus
Because we have both title and abstract information for trials, we need to build two corpora instead of one. Name them corpusTitle and corpusAbstract.
Following the commands from lecture, perform the following tasks (you might need to load the “tm” package first if it isn’t already loaded). Make sure to perform them in this order.
1. Convert the title variable to corpusTitle and the abstract variable to corpusAbstract.
2. Convert corpusTitle and corpusAbstract to lowercase.
3. Remove the punctuation in corpusTitle and corpusAbstract.
4. Remove the English language stop words from corpusTitle and corpusAbstract.
5. Stem the words in corpusTitle and corpusAbstract (each stemming might take a few minutes).
6. Build a document term matrix called dtmTitle from corpusTitle and dtmAbstract from corpusAbstract.
7. Limit dtmTitle and dtmAbstract to terms with sparseness of at most 95% (aka terms that appear in at least 5% of documents).
8. Convert dtmTitle and dtmAbstract to data frames (keep the names dtmTitle and dtmAbstract).
If the code length(stopwords("english")) does not return 174 for you, then please run the line of code in this file, which will store the standard stop words in a variable called sw.
When removing stop words, use tm_map(corpusTitle, removeWords, sw) and tm_map(corpusAbstract, removeWords, sw) instead of tm_map(corpusTitle, removeWords, stopwords("english")) and tm_map(corpusAbstract, removeWords, stopwords("english")).
How many terms remain in dtmTitle after removing sparse terms (aka how many columns does it have)?
sw = c("i", "me", "my", "myself", "we", "our", "ours", "ourselves", "you", "your", "yours", "yourself", "yourselves",
"he", "him", "his", "himself", "she", "her", "hers", "herself", "it", "its", "itself", "they", "them", "their", "theirs",

Page 39 :

# Limit dtmTitle and dtmAbstract to terms with sparseness of at most 95% (aka terms that appear in at least 5% of documents)
dtmAbstract = DocumentTermMatrix(corpusAbstract)
# Remove the sparse terms
dtmAbstract = removeSparseTerms(dtmAbstract, 0.95)
dtmAbstract = as.data.frame(as.matrix(dtmAbstract))
colnames(dtmAbstract) = make.names(colnames(dtmAbstract))
ncol(dtmAbstract)
## [1] 335

31 terms remain in dtmTitle and 335 terms remain in dtmAbstract.

What is the most frequent word stem across all the abstracts? Hint: you can use colSums() to compute the frequency of a word across all the abstracts.
# Obtain the frequency of each word stem
frequency <- colSums(dtmAbstract)
which.max(frequency)
## patient
##     212

“patient” is the most frequent word stem across all abstracts (212 is its column index, not its count).

Building a model
We want to combine dtmTitle and dtmAbstract into a single data frame to make predictions. However, some of the variables in these data frames have the same names. To fix this issue, run the following commands:
colnames(dtmTitle) = paste0("T", colnames(dtmTitle))
colnames(dtmAbstract) = paste0("A", colnames(dtmAbstract))
# Prefix title terms with "T" and abstract terms with "A"
colnames(dtmTitle) = paste0("T", colnames(dtmTitle))
colnames(dtmAbstract) = paste0("A", colnames(dtmAbstract))

How many columns are in this combined data frame?
# Combine the data frames
dtm = cbind(dtmTitle, dtmAbstract)
ncol(dtm)
## [1] 366
# Add the dependent variable
dtm$trial = trials$trial

The combined data frame has 366 columns (31 + 335); adding the dependent variable trial brings it to 367.

Baseline Model
Now that we have prepared our data frame, it’s time to split it into a training and testing set and to build regression models. Set the random seed to 144 and use the sample.split function from the caTools package to split dtm into data frames named “train” and “test”, putting 70% of the data in the training set.

Page 40 :

What is the accuracy of the baseline model on the training set? (Remember that the baseline model predicts the most frequent outcome in the training set for all observations.)
# Split the dataset into training and testing sets
library(caTools)
set.seed(144)
spl = sample.split(dtm$trial, SplitRatio = 0.7)
train = subset(dtm, spl==TRUE)
test = subset(dtm, spl==FALSE)
# Tabulate the outcome (note: this uses the full dtm, not just train)
table(dtm$trial)
##
##    0    1
## 1043  817
a = table(dtm$trial)
kable(a)

Var1  Freq
0     1043
1      817

a[1]/(sum(a))
##         0
## 0.5607527

The accuracy of the baseline model is 0.5607527. Strictly, the question asks about the training set: there, the 730 non-trials among the 1302 training papers (the row totals of the training confusion matrix below) give 730/1302 ≈ 0.5607, essentially the same value because sample.split preserves the class ratio.

CART Model #1 - Tree
Build a CART model called trialCART, using all the independent variables in the training set to train the model, and then plot the CART model. Just use the default parameters to build the model (don’t add a minbucket or cp value). Remember to add the method="class" argument, since this is a classification problem.
What is the name of the first variable the model split on?
# Build the CART model
library(rpart)
library(rpart.plot)
trialCART = rpart(trial ~ ., data=train, method="class")
prp(trialCART)

Page 41 :

The first variable is Tphase.

CART Model #1 - Training (MAX)
# Predict on the training set (without newdata, predict() returns the fitted probabilities for train)
predTrain <- predict(trialCART)
summary(predTrain)
##        0                1
##  Min.   :0.1281   Min.   :0.05455
##  1st Qu.:0.2177   1st Qu.:0.13636
##  Median :0.7125   Median :0.28750
##  Mean   :0.5607   Mean   :0.43932
##  3rd Qu.:0.8636   3rd Qu.:0.78231
##  Max.   :0.9455   Max.   :0.87189

The maximum predicted probability that a paper is a trial is 0.872.

CART Model #1 - Training
# Obtain the training-set confusion matrix at a 0.5 threshold
t = table(train$trial, predTrain[,2]>=0.5)
kable(t)

Page 42 :

     FALSE  TRUE
0      631    99
1      131   441

# t is stored column-wise: t[1] = TN, t[2] = FN, t[3] = FP, t[4] = TP
(t[1] + t[4]) / nrow(train)
## [1] 0.8233487
t[4] / (t[4] + t[2])
## [1] 0.770979
t[1] / (t[1] + t[3])
## [1] 0.8643836

Training Set Accuracy = 0.8233487
Training Set Sensitivity = 0.770979
Training Set Specificity = 0.8643836

CART Model #1 - Test
# Predict on the test set
testPredictCART = predict(trialCART, newdata=test, type="class")
# Tabulate the test-set outcomes against the predictions
a = table(test$trial, testPredictCART)
kable(a)

     0    1
0  261   52
1   83  162

sum(diag(a))/(sum(a))
## [1] 0.7580645

Testing Set Accuracy = 0.7580645

ROCR testing set AUC
# Compute the test-set AUC with ROCR
library(ROCR)
predTest = predict(trialCART, newdata=test)[,2]
pred = prediction(predTest, test$trial)
as.numeric(performance(pred, "auc")@y.values)
## [1] 0.8371063

Testing Set AUC = 0.8371063
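The AUC can be read as the probability that a randomly chosen positive (here, a trial) gets a higher predicted probability than a randomly chosen negative, with ties counting one half. A minimal base-R sketch of that pairwise definition follows; the function name auc_pairwise and the scores and labels are hypothetical, not the trials data, and this is not how ROCR computes it internally.

```r
# AUC as the share of (positive, negative) pairs ranked correctly; ties count 0.5
auc_pairwise <- function(scores, labels) {
  pos <- scores[labels == 1]
  neg <- scores[labels == 0]
  # outer() compares every positive score with every negative score
  wins <- outer(pos, neg, ">") + 0.5 * outer(pos, neg, "==")
  mean(wins)
}

# Hypothetical predicted probabilities and true labels
scores <- c(0.9, 0.8, 0.35, 0.6, 0.2, 0.1)
labels <- c(1,   1,   1,    0,   0,   0)
auc_pairwise(scores, labels)   # 8 of 9 pairs ordered correctly
```

CART models output only a handful of distinct probabilities (one per leaf), so ties between positives and negatives are common, which is why the half-credit rule matters.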

Page 43 :

Decision-maker tradeoffs
The decision maker for this problem, a researcher performing a review of the medical literature, would use a model (like the CART one we built here) in the following workflow:
1. For all of the papers retrieved in the PubMed search, predict which papers are clinical trials using the model. This yields some initial Set A of papers predicted to be trials, and some Set B of papers predicted not to be trials. (See the figure below.)
2. Then, the decision maker manually reviews all papers in Set A, verifying that each paper meets the study’s detailed inclusion criteria (for the purposes of this analysis, we assume this manual review is 100% accurate at identifying whether a paper in Set A is relevant to the study). This yields a more limited set of papers to be included in the study, which would ideally be all papers in the medical literature meeting the detailed inclusion criteria for the study.
3. Perform the study-specific analysis, using data extracted from the limited set of papers identified in step 2.
This process is shown in the figure below.

What is the cost associated with the model in Step 1 making a false negative prediction?
By definition, a false negative is a paper that should have been included in Set A but was missed by the model. This means a study that should have been included in Step 3 was missed, affecting the results.

What is the cost associated with the model in Step 1 making a false positive prediction?
By definition, a false positive is a paper that should not have been included in Set A but that was actually included.
However, because the manual review in Step 2 is assumed to be 100% effective, this extra paper will not make it into the more limited set of papers, and therefore this mistake will not affect the analysis in Step 3.

Given the costs associated with false positives and false negatives, which of the following is most accurate?
A false negative might negatively affect the results of the literature review and analysis, while a false positive is a nuisance (one additional paper that needs to be manually checked). As a result, the cost of a false negative is much higher than the cost of a false positive, so much so that many studies actually use no machine learning (aka no Step 1) and have two people manually review each search result in Step 2. As always, we prefer a lower threshold in cases where false negatives are more costly than false positives, since we will make fewer negative predictions.
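The argument above can be made concrete by giving each kind of error a cost and choosing the cutoff with the lowest total cost. A minimal base-R sketch, in which the scores, labels, candidate cutoffs and the 10-to-1 cost ratio (a false negative assumed to cost 10 times a false positive) are all hypothetical:

```r
# Total misclassification cost when predicting "trial" for score >= cutoff
total_cost <- function(cutoff, scores, labels, cost_fn, cost_fp) {
  pred <- scores >= cutoff
  fn <- sum(!pred & labels == 1)   # trials the model missed
  fp <- sum(pred & labels == 0)    # non-trials sent to manual review
  cost_fn * fn + cost_fp * fp
}

# Hypothetical predicted probabilities and true labels
scores <- c(0.95, 0.80, 0.40, 0.30, 0.70, 0.25, 0.20, 0.10)
labels <- c(1,    1,    1,    1,    0,    0,    0,    0)

cutoffs <- c(0.1, 0.2, 0.3, 0.5, 0.9)
costs <- sapply(cutoffs, total_cost, scores = scores, labels = labels,
                cost_fn = 10, cost_fp = 1)
data.frame(cutoff = cutoffs, cost = costs)
cutoffs[which.min(costs)]   # cheapest cutoff among those tried
```

With these made-up numbers the cheapest cutoff is 0.3, well below 0.5: once missed trials are expensive, it pays to accept a few extra papers for manual review.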

Page 44 :

Analytics Edge: Unit 5 - Separating Spam from Ham (Part 1)
Sulman Khan, October 27, 2018

Background Information on the Dataset
Nearly every email user has at some point encountered a “spam” email, which is an unsolicited message often advertising a product, containing links to malware, or attempting to scam the recipient. Roughly 80-90% of the more than 100 billion emails sent each day are spam emails, most being sent from botnets of malware-infected computers. The remainder of emails are called “ham” emails.
As a result of the huge number of spam emails being sent across the Internet each day, most email providers offer a spam filter that automatically flags likely spam messages and separates them from the ham. Though these filters use a number of techniques (e.g. looking up the sender in a so-called “Blackhole List” that contains IP addresses of likely spammers), most rely heavily on the analysis of the contents of an email via text analytics.
In this homework problem, we will build and evaluate a spam filter using a publicly available dataset first described in the 2006 conference paper “Spam Filtering with Naive Bayes – Which Naive Bayes?” by V. Metsis, I. Androutsopoulos, and G. Paliouras. The “ham” messages in this dataset come from the inbox of former Enron Managing Director for Research Vincent Kaminski, one of the inboxes in the Enron Corpus. One source of spam messages in this dataset is the SpamAssassin corpus, which contains hand-labeled spam messages contributed by Internet users. The remaining spam was collected by Project Honey Pot, a project that collects spam messages and identifies spammers by publishing email addresses that humans would know not to contact but that bots might target with spam.
The full dataset we will use was constructed as roughly a 75/25 mix of the ham and spam messages.
The dataset contains just two fields:
• text: The text of the email.
• spam: A binary variable indicating if the email was spam.

R Exercises
Loading the Dataset
Begin by loading the dataset emails.csv into a data frame called emails. Remember to pass the stringsAsFactors=FALSE option when loading the data.
emails = read.csv("emails.csv", stringsAsFactors=FALSE)

How many emails are in the dataset?
# Examine the structure of emails
str(emails)
## 'data.frame': 5728 obs. of 2 variables:
## $ text: chr "Subject: naturally irresistible your corporate identity lt is really hard to recollect a company : the market"| __truncated__ "Subject: the stock trading gunslinger fanny is merrill but muzo not colza attainder and penultimate like esmar"| __truncated__ "Subject: unbelievable new homes made easy im wanting to show you this homeowner you have been pre - approved"| __truncated__ "Subject: 4 color printing special request additional information now ! click here click here for a printable "| __truncated__ ...

Page 45 :

## $ spam: int 1 1 1 1 1 1 1 1 1 1 ...

There are 5728 emails in the dataset.

How many of the emails are spam?
# Count ham (0) and spam (1) emails
table(emails$spam)
##
##    0    1
## 4360 1368

1368 emails are spam.

Which word appears at the beginning of every email in the dataset? Respond as a lower-case word with punctuation removed.
# Look at the text of one email
str(emails$text[2])
## chr "Subject: the stock trading gunslinger fanny is merrill but muzo not colza attainder and penultimate like esmar"| __truncated__

The word “subject” appears at the beginning of every email in the dataset.

Could a spam classifier potentially benefit from including the frequency of the word that appears in every email?
We know that each email has the word “subject” appear at least once, but the frequency with which it appears might help us differentiate spam from ham. For instance, a long email chain would have the word “subject” appear a number of times, and this higher frequency might be indicative of a ham message.

How many characters are in the longest email in the dataset (where longest is measured in terms of the maximum number of characters)?
max(nchar(emails$text))
## [1] 43952

43952 characters are in the longest email.

Which row contains the shortest email in the dataset? (Just like in the previous problem, shortest is measured in terms of the fewest number of characters.)
# Find the row with the shortest email
which.min(nchar(emails$text))
## [1] 1992

Row 1992 contains the shortest email in the dataset.

Preparing the Corpus
1. Build a new corpus variable called corpus.
2. Using tm_map, convert the text to lowercase.
3. Using tm_map, remove all punctuation from the corpus.
4. Using tm_map, remove all English stopwords from the corpus.

5. Using tm_map, stem the words in the corpus.
6. Build a document term matrix from the corpus, called dtm.

If the code length(stopwords("english")) does not return 174 for you, then please run the line of code in this file, which will store the standard stop words in a variable called sw. When removing stop words, use tm_map(corpus, removeWords, sw) instead of tm_map(corpus, removeWords, stopwords("english")).

How many terms are in dtm?

# Preparing the Corpus
library(tm)
library(SnowballC)  # stemDocument relies on the SnowballC stemmer
# Build a new corpus variable called corpus
corpus = VCorpus(VectorSource(emails$text))
# Convert the text to lowercase
corpus = tm_map(corpus, content_transformer(tolower))
# Remove all punctuation from the corpus
corpus = tm_map(corpus, removePunctuation)
# Remove all English stopwords from the corpus
corpus = tm_map(corpus, removeWords, stopwords("english"))
# Stem the words in the corpus
corpus = tm_map(corpus, stemDocument)
# Build a document term matrix from the corpus, called dtm
dtm = DocumentTermMatrix(corpus)
dtm
## <<DocumentTermMatrix (documents: 5728, terms: 28687)>>
## Non-/sparse entries: 481719/163837417
## Sparsity           : 100%
## Maximal term length: 24
## Weighting          : term frequency (tf)

There are 28687 terms in dtm.

To obtain a more reasonable number of terms, limit dtm to contain terms appearing in at least 5% of documents, and store this result as spdtm (don't overwrite dtm, because we will use it in a later step of this homework). How many terms are in spdtm?

# Remove the sparse terms
spdtm = removeSparseTerms(dtm, 0.95)
spdtm
## <<DocumentTermMatrix (documents: 5728, terms: 330)>>
## Non-/sparse entries: 213551/1676689
## Sparsity           : 89%
## Maximal term length: 10
## Weighting          : term frequency (tf)

There are 330 terms in spdtm.
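To see what each preparation step and the 5% sparsity cut actually do, the sketch below mimics them in base R on three made-up emails, without tm. It is an approximation under stated assumptions: the mini stopword list (stop_words) and the suffix stripper crude_stem() are hypothetical stand-ins for stopwords("english") and the Porter stemmer behind stemDocument, and remove_sparse() is a hypothetical re-implementation of the rule removeSparseTerms() applies to our understanding (a term survives only if it appears in more than (1 - sparse) × number-of-documents documents).

```r
# Base-R sketch of the corpus preparation steps on three made-up emails.
# Assumptions: stop_words and crude_stem() are hypothetical, much smaller and
# cruder stand-ins for tm's stopwords("english") and stemDocument.
emails_toy <- c(
  "Subject: Meeting on Monday, please confirm!",
  "Subject: FREE money, click here now",
  "Subject: meeting notes attached"
)
stop_words <- c("on", "here", "now", "the", "a")  # hypothetical mini stopword list

# Hypothetical suffix stripper; the real Porter stemmer is far more careful
crude_stem <- function(words) sub("(ing|ed|s)$", "", words)

prepare <- function(txt) {
  txt <- tolower(txt)                                     # step 2: lowercase
  txt <- gsub("[[:punct:]]", "", txt)                     # step 3: remove punctuation
  words <- strsplit(txt, "[[:space:]]+")[[1]]             # split into words
  words <- words[words != "" & !(words %in% stop_words)]  # step 4: drop stopwords
  crude_stem(words)                                       # step 5: stem
}

tokens <- lapply(emails_toy, prepare)
vocab  <- sort(unique(unlist(tokens)))

# Step 6: document-term matrix (rows = emails, columns = terms, cells = counts)
dtm_toy <- t(vapply(tokens,
                    function(tk) as.numeric(table(factor(tk, levels = vocab))),
                    numeric(length(vocab))))
colnames(dtm_toy) <- vocab

# Share of zero cells, comparable to the "Sparsity" line tm prints
sparsity <- mean(dtm_toy == 0)

# Hypothetical helper: keep a term only if it occurs in more than
# (1 - sparse) * nDocs documents
remove_sparse <- function(m, sparse) {
  doc_freq <- colSums(m > 0)
  m[, doc_freq > (1 - sparse) * nrow(m), drop = FALSE]
}
sp_toy <- remove_sparse(dtm_toy, 0.5)

print(dtm_toy)
cat("sparsity:", round(100 * sparsity), "%\n")
print(colnames(sp_toy))
```

With sparse = 0.5 on three emails, only terms found in at least two emails survive (here "meet" and "subject"). Applied to the full matrix with sparse = 0.95, the same rule would keep a term only if it occurs in more than 0.05 × 5728 = 286.4 emails, i.e. in at least 287 of them.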

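Two details of the next exercise are easy to trip over: make.names() rewrites stems that are not valid R names (which is why stems such as X2000, X000 and next. show up in the frequency tables), and colSums() on a subset of emailsSparse also sums the added spam column. The sketch below shows both on a hypothetical four-email data frame (emails_sparse_toy, with made-up counts and labels); it is an illustration, not the homework's own code.

```r
# Hypothetical stand-in for emailsSparse: made-up stem counts for 4 emails.
raw_stems <- c("enron", "2000", "free", "next")
counts <- matrix(c(3, 1, 0, 1,
                   0, 0, 4, 1,
                   5, 2, 0, 0,
                   1, 0, 3, 0),
                 nrow = 4, byrow = TRUE,
                 dimnames = list(NULL, raw_stems))

emails_sparse_toy <- as.data.frame(counts)
# make.names() prefixes names that start with a digit with "X" and appends
# "." to reserved words, so "2000" -> "X2000" and "next" -> "next."
colnames(emails_sparse_toy) <- make.names(colnames(emails_sparse_toy))

# Made-up labels: 1 = spam, 0 = ham
emails_sparse_toy$spam <- c(0, 1, 0, 1)

# Sum every stem within each class, leaving out the dependent variable
stem_cols <- setdiff(names(emails_sparse_toy), "spam")
ham_sums  <- colSums(emails_sparse_toy[emails_sparse_toy$spam == 0, stem_cols])
spam_sums <- colSums(emails_sparse_toy[emails_sparse_toy$spam == 1, stem_cols])

n_ham_frequent  <- sum(ham_sums >= 5)   # stems seen at least 5 times in ham
n_spam_frequent <- sum(spam_sums >= 2)  # stems seen at least 2 times in spam

# Summing subset(...) directly also sums the label column; over the spam rows
# that sum equals the number of spam emails, so it can pass a threshold
with_label   <- colSums(subset(emails_sparse_toy, spam == 1))
n_with_label <- sum(with_label >= 2)

print(names(emails_sparse_toy))
print(ham_sums)
print(spam_sums)
cat(n_ham_frequent, n_spam_frequent, n_with_label, "\n")
```

On the real data the spam column sums to 1368 over the spam emails, which is above the 1000 threshold used in the spam question, so leaving it in would inflate that count by one. Over the ham emails it sums to 0, so it is harmless there.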

Build a data frame called emailsSparse from spdtm, and use the make.names function to make the variable names of emailsSparse valid. What is the word stem that shows up most frequently across all the emails in the dataset?

# Build a data frame called emailsSparse from spdtm
emailsSparse = as.data.frame(as.matrix(spdtm))
# Make the column names valid R names (e.g. "2000" becomes "X2000")
colnames(emailsSparse) = make.names(colnames(emailsSparse))
# Find the most frequent word stem
frequency <- colSums(emailsSparse)
which.max(frequency)
## enron
##    92

"enron" is the most frequent word stem (92 is its column index).

How many word stems appear at least 5000 times in the ham emails in the dataset? Hint: in this and the next question, remember not to count the dependent variable we just added.

# Add a variable called spam
emailsSparse$spam = emails$spam
# Sum each column over the ham emails and sort in increasing order
library(knitr)  # provides kable()
a = sort(colSums(subset(emailsSparse, spam == 0)))
kable(a)

stem        count
spam        0
life        80
remov       103
money       114
onlin       173
without     191
websit      194
click       217
special     226
wish        229
repli       239
buy         243
net         243
link        247
immedi      249
done        254
mean        259
design      261
lot         268
effect      270
info        273
either      279
read        279
write       286
line        289
begin       291
sorri       293
success     293
experi      346
thing       347
allow       348
check       351
due         351
type        352
happi       354
return      355
expect      356
short       357
effort      358
open        360
internet    361
sincer      361
public      364
recent      368
anoth       369
alreadi     372
home        375
made        380
respond     382
given       383
etc         385
put         385
within      386
place       388
right       390
version     390
hello       395
sure        396
area        397
run         398
arrang      399
account     401
join        403
hour        404
locat       406
togeth      406
engin       411
import      411
per         412
corpor      414
high        416
result      418
hear        420
final       422
deal        423
applic      428
even        429
web         430
custom      433
soon        435
long        436
sinc        439
futur       440
member      446
X000        447
event       447
don         450
part        450
feel        453
tuesday     454
wednesday   456
still       457
unit        457
site        458
X853        461
continu     464
understand  464
resourc     466
robert      466
analysi     468
form        468
point       474
assist      475
confirm     485
differ      489
intern      489
might       490
real        490
case        492
howev       496
comment     505
abl         515
complet     515
rate        516
appreci     518
tri         521
move        526
updat       527
approv      533
suggest     533
free        535
contract    544
detail      546
morn        546
end         550
mani        550
attend      558
thursday    558
direct      561
requir      562
cours       567
person      569
relat       573
depart      575
today       577
start       580
way         586
mark        588
valu        590
problem     593
peopl       599
note        600
school      607
invit       614
access      617
term        625
juli        630
monday      630
gibner      633
base        635
director    640
offer       643
cost        646
addit       648
kevin       654
great       655
set         658
file        659
find        665
much        669
oper        669
plan        738
back        739
name        745
come        748
opportun    760
report      772
product     776
two         787
origin      796
ask         797
credit      798
state       806
system      816
process     826
hope        828
london      828
just        830
receiv      830
chang       831
review      834
current     841
shall       844
friday      847
team        850
phone       858
issu        865
data        868
avail       872
last        874
good        876
give        883
www         897
gas         905
list        907
posit       917
visit       920
includ      924
resum       928
want        1068
question    1069
program     1080
think       1084
X713        1097
crenshaw    1115
attach      1155
trade       1167
help        1168
email       1201
compani     1225
request     1227
see         1238
communic    1251
confer      1264
discuss     1270
make        1281
contact     1301
follow      1308
interview   1320
project     1328
mail        1352
present     1397
busi        1416
interest    1429
option      1432
day         1440
call        1497
one         1516
year        1523
week        1527
messag      1538
houston     1577
also        1604
look        1607
edu         1620
corp        1643
shirley     1687
develop     1691
get         1768
new         1777
use         1784
let         1856
regard      1859
inform      1883
need        1890
power       1972
may         1976
like        1980
risk        2097
energi      2124
market      2150
model       2170
price       2191
work        2293
manag       2334
know        2345
group       2474
meet        2544
time        2552
research    2752
forward     2952
X2001       3060
can         3426
thank       3558
com         4444
pleas       4494
kaminski    4801
X2000       4935
hou         5569
will        6802
vinc        8531
subject     8625
ect         11417
enron       13388

6 word stems appear at least 5000 times in the ham emails in the dataset (hou, will, vinc, subject, ect and enron).

How many word stems appear at least 1000 times in the spam emails in the dataset?

# Sort the spam emails in the dataset

stem        count
depart      46
confirm     47
respond     48
school      48
corp        49
etc         49
hear        49
howev       49
sorri       50
idea        51
energi      55
discuss     56
open        56
option      56
soon        57
understand  57
cours       59
experi      59
associ      62
point       62
bring       63
director    65
particip    65
anoth       66
join        66
still       66
final       68
research    68
case        69
set         69
specif      69
given       70
juli        71
problem     73
put         73
alreadi     74
ask         74
abl         75
deal        75
fax         75
book        76
team        76
issu        79
locat       79
meet        79
updat       79
lot         80
sincer      80
better      82
short       82
sinc        82
done        83
question    83
recent      83
possibl     84
contract    85
end         85
move        86
data        87
might       87
continu     88
note        88
feel        90
resourc     90
sever       90
area        92
communic    92
realli      93
due         94
direct      96
origin      96
copi        97
unit        97
long        98
member      99
sure        99
allow       102
dear        104
public      104
write       104
event       105
let         107
differ      109
file        111
involv      111
respons     113
creat       114
type        114
approv      115
detail      115
effort      115
intern      117
request     117
say         118
import      119
support     120
part        121
relat       121
assist      123
last        124
two         124
back        125
keep        125
addit       126
date        127
place       128
group       130
mean        131
valu        131
think       132
offic       133
read        134
immedi      136
check       137
applic      139
hello       139
tri         140
review      142
believ      143
phone       143
hour        144
power       145
present     146
process     149
corpor      151
oper        151
full        152
return      154
come        155
sent        155
opportun    158
real        158
repli       158
line        159
engin       160
term        161
credit      162
well        164
gas         165
info        165
plan        166
next.       170
risk        170
increas     171
access      172
give        172
thank       172
link        174
requir      174
version     174
cost        175
great       182
wish        185
regard      186
posit       187
thing       188
call        190
develop     191
complet     192
much        192
even        193
project     194
design      196
form        196
expect      198
person      198
without     198
buy         199
trade       199
effect      201
rate        201
base        202
find        202
current     203
first       203
chang       204
visit       206
financi     207
high        208
mani        208
forward     209
good        221
special     225
don         226
success     226
per         230
number      231
week        231
result      237
web         238
industri    239
contact     242
made        242
follow      244
month       249
right       249
today       251
also        260
help        262
internet    262
manag       266
know        269
way         278
avail       280
state       280
futur       282
home        285
start       300
system      302
take        304
net         305
includ      314
life        320
see         329
name        344
onlin       345
within      346
remov       357
best        358
program     358
peopl       359
custom      363
year        367
like        372
interest    385
send        393
servic      395
look        396
work        415
day         420
want        420
product     421
www         426
account     428
provid      435
need        438
softwar     440
messag      445
site        455
address     461
may         489
list        503
price       503
new         504
websit      506
report      507
secur       520
just        524
offer       528
invest      540
order       541
use         546
click       552
X000        560
now         575
one         592
time        593
http        600
market      600
make        603
free        606
pleas       619
money       662
get         694
receiv      727
inform      818