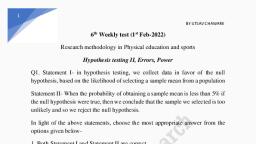

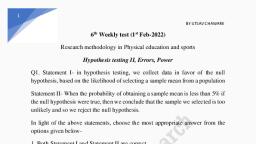

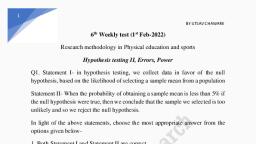

Notes of B.Sc.-III(Statistics), Statistical Inference-I 100 Statistical Tests - Study Material

Page 2 :

100 STATISTICAL TESTS

Page 4 :

100 STATISTICAL TESTS, 3rd Edition, Gopal K. Kanji

Page 5 :

© Gopal K. Kanji 2006. First edition published 1993, reprinted 1993. Reprinted with corrections 1994. Reprinted 1995, 1997. New edition published 1999. Reprinted 2000, 2001, 2003 and 2005. Third edition published 2006.

All rights reserved. No part of this publication may be reproduced, stored in a retrieval system, transmitted or utilized in any form or by any means, electronic, mechanical, photocopying, recording or otherwise, without permission in writing from the Publishers.

SAGE Publications Ltd, 1 Oliver’s Yard, 55 City Road, London EC1Y 1SP
SAGE Publications Inc, 2455 Teller Road, Thousand Oaks, California 91320
SAGE Publications India Pvt Ltd, B-42 Panchsheel Enclave, PO Box 4190, New Delhi 110 017

British Library Cataloguing in Publication Data: A catalogue record for this book is available from the British Library.
ISBN-10 1 4129 2375 1, ISBN-10 1 4129 2376 X, ISBN-13 978 1 4129 2375 0, ISBN-13 978 1 4129 2376 7 (Pbk)
Library of Congress catalog card number 98-61738: 2005910188
Typeset by Newgen Imaging Systems (P) Ltd, Chennai, India. Printed in Great Britain by The Cromwell Press Ltd, Trowbridge, Wiltshire.

Page 6 :

CONTENTS

Acknowledgements
Preface
List of Common Symbols
Introduction to the Book
Introduction to Statistical Testing
Examples of Test Procedures
List of Tests
Classification of Tests
The Tests
List of Tables
Tables
References
Index

Page 7 :

ACKNOWLEDGEMENTS

The author and publishers wish to thank the following for permission to use copyright material:

The American Statistical Association for Table 16 adapted from Massey, F.J. Jr (1951) ‘The Kolmogorov–Smirnov test for goodness of fit’, Journal of the American Statistical Association, 4(6). Copyright © 1951 by the American Statistical Association; the Biometrika Trustees for Table 33 from Durbin, J. and Watson, G.S. (1951) ‘Testing for serial correlation in least squares regression II’, Biometrika, 38, pp. 173–5; for Table 36 from Stephens, M.A. (1964) ‘The distribution of the goodness of fit statistic, $U_n^2$ II’, Biometrika, 51, pp. 393–7; for Table 3 from Pearson, E.S. and Hartley, H.O. (1970) Biometrika Tables for Statisticians, Vol. I, Cambridge University Press; for Table 12 from Merrington, M. and Thompson, C.M. (1946) ‘Tables for testing the homogeneity of a set of estimated variances’, Biometrika, 33, pp. 296–304; and for Table 7 from Geary, R.E. and Pearson, E.S. (n.d.) ‘Tests of normality’; Harcourt Brace Jovanovich Ltd for Tables 38 and 39 from Mardia, K.V. (1972) Statistics of Directional Data, Academic Press; and Tables 35, 36 and 37 from Batschelet, E. (1981) Circular Statistics in Biology, Academic Press; the Institute of Mathematical Statistics for Table 28 from Hart, B.I. (1942) ‘Significance levels for the ratio of the mean square successive difference to the variance’, Annals of Mathematical Statistics, 13, pp. 445–7; and for Table 29 from Anderson, R.L. (1942) ‘Distribution of the serial correlation coefficient’, Annals of Mathematical Statistics, 13, pp. 1–13; Longman Group UK Ltd on behalf of the Literary Executor of the late Sir Ronald A. Fisher, FRS and Dr Frank Yates FRS for Table 2 from Statistical Tables for Biological, Agricultural and Medical Research (6th edition, 1974) Table IV; McGraw-Hill, Inc. for Tables 8, 15, 18 and 31 from Dixon, W.J. and Massey, F.J. Jr (1957) Introduction to Statistical Analysis; Macmillan Publishing Company for Table 1(a) from Walpole, R.E. and Myers, R.H. (1989) Probability and Statistics for Engineers and Scientists, 4th edition, Table A.3. Copyright © 1989 by Macmillan Publishing Company; Routledge for Tables 4 and 22 from Neave, H.R. (1978) Statistical Tables, Allen & Unwin; Springer-Verlag GmbH & Co. KG for Tables 9, 10, 14, 19, 23, 26 and 32 from Sachs, L. (1972) Statistische Auswertungsmethoden, 3rd edition; TNO Institute of Preventive Health Care, Leiden, for Tables 6, 11, 13, 25, 27 and 30 from De Jonge, H. (1963–4) Inleiding tot de Medische Statistiek, 2 vols, 3rd edition, TNO Health Research.

Every effort has been made to trace all the copyright holders, but if any have been inadvertently overlooked the publishers will be pleased to make the necessary arrangement at the first opportunity.

Page 8 :

PREFACE

Some twenty years ago, it was only necessary to know about a dozen statistical tests in order to be a practising statistician, and these were all available in the few statistical textbooks that existed at that time. In recent years the number of tests has grown tremendously and, while modern books carry the more common tests, it is often quite difficult for a practising statistician quickly to turn up a reference to some of the less used but none the less important tests which are now in the literature. Accordingly, we have attempted to collect together information on most commonly used tests which are currently available and present it, together with a guide to further reading, to make a useful reference book for both the applied statistician and the everyday user of statistics. Naturally, any such compilation must omit some tests through oversight, and the author would be very pleased to hear from any reader about tests which they feel ought to have been included.

The work is divided into several sections. In the first we define a number of terms used in carrying out statistical tests, we define the thinking behind statistical testing and indicate how some of the tests can be linked together in an investigation. In the second section we give examples of test procedures and in the third we provide a list of all the 100 statistical tests. The fourth section classifies the tests under a variety of headings. This became necessary when we tried to arrange the tests in some logical sequence. Many such logical sequences are available and, to meet the possible needs of the reader, these cross-reference lists have been provided. The main part of the work describes most commonly used tests currently available to the working statistician. No attempts at proof are given, but an elementary knowledge of statistics should be sufficient to allow the reader to carry out the test. In every case the appropriate formulae are given and where possible we have used schematic diagrams to preclude any ambiguities in notation. Where there has been a conflict of notation between existing textbooks, we have endeavoured to use the most commonly accepted symbols. The next section provides a list of the statistical tables required for the tests followed by the tables themselves, and the last section provides references for further information.

Because we have brought together material which is spread over a large number of sources, we feel that this work will provide a handy reference source, not only for practising statisticians but also for teachers and students of statistics. We feel that no one can remember details of all the tests described here. We have tried to provide not only a memory jogger but also a first reference point for anyone coming across a particular test with which he or she is unfamiliar.

Lucidity of style and simplicity of expression have been our twin objectives, and every effort has been made to avoid errors. Constructive criticism and suggestions will help us in improving the book.

Page 9 :

COMMON SYMBOLS

Each test or method may have its own terminology and symbols but the following are commonly used by all statisticians.

n    number of observations (sample size)
K    number of samples (each having n elements)
α    level of significance
v    degrees of freedom
σ    standard deviation (population)
s    standard deviation (sample)
µ    population mean
x̄    sample mean
ρ    population correlation coefficient
r    sample correlation coefficient
Z    standard normal deviate

Page 10 :

INTRODUCTION TO THE BOOK

This book presents a collection of statistical tests which can help experimenters and researchers draw conclusions from a series of observational data. The main part of the book provides a one/two page summary of each of the most common statistical tests, complete with details of each test objective, the limitations (or assumptions) involved, a brief outline of the method, a worked example and the numerical calculation. At the start of the book there are more detailed worked examples of the nine most common tests. The information provides an ideal “memory jog” for statisticians, practitioners and other regular users of statistics who are competent statisticians but who need a sourcebook for precise details of some or all the various tests.

100 Statistical Tests lists 100 different inferential tests used to solve a variety of statistical problems. Each test is presented in an accurate, succinct format with a suitable formula. The reader can follow an example using the numerical calculation provided (without the arithmetic steps), refer to the needed table and review the statistical conclusion.

After a first introduction to statistical testing, the second section of the book provides examples of the test procedures, which are laid out clearly, while the graphical displays of critical regions are presented in a standard way.

The third section lists the objective of each of the tests described in the text. The next section gives a useful classification of the tests presented by the type of the tests: (a) for linear data: parametric classical tests, parametric tests, distribution free tests, sequential tests and (b) for circular data: parametric tests. This invaluable table also gives a concise summary of common statistical problem types and a list of tests which may be appropriate. The problem types are classified by the number of samples (1, 2, or k samples), whether parametric or non-parametric tests are required, and the area of interest (e.g. central tendency, distribution function, association).

The pages of the next section are devoted to the description of the 100 tests. Under each test, the object, limitation and the method of testing are presented, followed by an example and the numerical calculation. The listings of limitations add to the comprehensive picture of each test. The descriptions of the methods are explained clearly. The examples cited in the tests help the reader gain a clear understanding of the methods of testing.

The first of the following two sections gives the list of tables while the second section displays 39 statistical tables, many of which have accompanying diagrams illustrated in a standard way. This comprehensive list covers all the commonly used standard tables. The book concludes with references and an index.

Page 11 :

INTRODUCTION TO STATISTICAL TESTING

Having collected together a number of tests, it is necessary to consider what can be tested, and we include here some very general remarks about the general problem of hypothesis testing. Students regard this topic as one full of pitfalls for the unwary, and even teachers and experienced statisticians have been known to misinterpret the conclusions of their analysis.

Broadly speaking there are two basic concepts to grasp before commencing. First, the tests are designed neither to prove nor to disprove hypotheses. We never set out to prove anything; our aim is to show that an idea is untenable as it leads to an unsatisfactorily small probability. Second, the hypothesis we are trying to disprove is always chosen to be the one in which there is no change; for example, there is no difference between the two population means, between the two samples, etc. This is why it is usually referred to as the null hypothesis, H0. If these concepts were firmly held in mind, we believe that the subject of hypothesis testing would lose a lot of its mystique. (However, it is only fair to point out that some hypotheses are not concerned with such matters.)

To describe the process of hypothesis testing we feel that we cannot do better than follow the five-step method introduced by Neave (1976a):

Step 1 Formulate the practical problem in terms of hypotheses. This can be difficult in some cases. We should first concentrate on what is called the alternative hypothesis, H1, since this is the more important from the practical point of view. This should express the range of situations that we wish the test to be able to diagnose. In this sense, a positive test can indicate that we should take action of some kind. In fact, a better name for the alternative hypothesis would be the action hypothesis. Once this is fixed, it should be obvious whether we carry out a one- or two-tailed test. The null hypothesis needs to be very simple and represents the status quo, i.e. there is no difference between the processes being tested. It is basically a standard or control with which the evidence pointing to the alternative can be compared.

Step 2 Calculate a statistic (T), a function purely of the data. All good test statistics should have two properties: (a) they should tend to behave differently when H0 is true from when H1 is true; and (b) their probability distribution should be calculable under the assumption that H0 is true. It is also desirable that tables of this probability distribution should exist.

Step 3 Choose a critical region. We must be able to decide on the kind of values of T which will most strongly point to H1 being true rather than H0 being true. Critical regions can be of three types: right-sided, so that we reject H0 if the test statistic is greater than or equal to some (right) critical value; left-sided, so that we reject H0 if the test statistic is less than or equal to some (left) critical value; both-sided, so that we reject H0 if the test statistic is either greater than or equal to the right critical value or less than or equal to the left critical value. A value of T lying in a suitably defined critical region will lead us to reject H0 in favour of H1; if T lies outside the critical region we do not reject H0. We should never conclude by accepting H0.

Step 4 Decide the size of the critical region. This involves specifying how great a risk we are prepared to run of coming to an incorrect conclusion. We define the significance level or size of the test, which we denote by α, as the risk we are prepared to take in rejecting H0 when it is in fact true. We refer to this as an error of the first

Page 12 :

type or a Type I error. We usually set α to between 1 and 10 per cent, depending on the severity of the consequences of making such an error.

We also have to contend with the possibility of not rejecting H0 when it is in fact false and H1 is true. This is an error of the second type or Type II error, and the probability of this occurring is denoted by β.

Thus in testing any statistical hypothesis, there are four possible situations which determine whether our decision is correct or in error. These situations are illustrated as follows:

                        Situation
Conclusion              H0 is true          H0 is false
H0 is not rejected      Correct decision    Type II error
H0 is rejected          Type I error        Correct decision

Step 5 Many textbooks stop after step 4, but it is instructive to consider just where in the critical region the calculated value of T lies. If it lies close to the boundary of the critical region we may say that there is some evidence that H0 should be rejected, whereas if it is at the other end of the region we would conclude there was considerable evidence. In other words, the actual significance level of T can provide useful information beyond the fact that T lies in the critical region.

In general, the statistical test provides information from which we can judge the significance of the increase (or decrease) in any result. If our conclusion shows that the increase is not significant then it will be necessary to confirm that the experiment had a fair chance of establishing an increase had there been one present to establish.

In order to do this we generally turn to the power function of the test, which is usually computed before the experiment is performed, so that if it is insufficiently powerful then the design can be changed. The power function is the probability of detecting a genuine increase underlying the observed increase in the result, plotted as a function of the genuine increase, and therefore the experimental design must be chosen so that the probability of detecting the increase is high. Also the choice among several possible designs should be made in favour of the experiment with the highest power. For a given experiment testing a specific hypothesis, the power of the test is given by 1 − β.

Having discussed the importance of the power function in statistical tests we would now like to introduce the concept of robustness. The term ‘robust’ was first introduced in 1953 to denote a statistical procedure which is insensitive to departures from the assumptions underlying the model on which it is based. Such procedures are in common use, and several studies of robustness have been carried out in the field of ‘analysis of variance’. The assumptions usually associated with analysis of variance are that the errors in the measurements (a) are normally distributed, (b) are statistically independent and (c) have equal variances.

Most of the parametric tests considered in this book have made the assumption that the populations involved have normal distributions. Therefore a test should only be carried out when the normality assumption is not violated. It is also a necessary part of

Page 13 :

the test to check the effect of applying these tests when the assumption of normality is violated.

In parametric tests the probability distribution of the test statistic under the null hypothesis can only be calculated by an additional assumption on the frequency distribution of the population. If this assumption is not true then the test loses its validity. However, in some cases a deviation from the assumption has only a minor influence on the statistical test, indicating a robust procedure. A parametric test also offers greater discrimination than the corresponding distribution-free test.

For the non-parametric test no assumption has to be made regarding the frequency distribution and therefore one can use estimates for the probability that any observation is greater than a predetermined value.

Neave (1976b) points out that it was the second constraint in step 2, namely that the probability distribution of the test statistic should be calculable, which led to the growth of the number of non-parametric tests. An inappropriate assumption of normality had often to be built into the tests. In fact, when comparing two samples, we need only look at the relative ranking of the sample members. In this way under H0 all the rank sequences are equally likely to occur, and so it became possible to generate any required significance level comparatively easily.

Two simple tests based on this procedure are the Wald–Wolfowitz number of runs test and the median test proposed by Mood, but these are both low in power. The Kolmogorov–Smirnov test has higher power but is more difficult to execute. A test which is extremely powerful and yet still comparatively easy to use is the Wilcoxon–Mann–Whitney test. Many others are described in later pages of this book.
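The remark that under H0 all rank sequences are equally likely can be made concrete with a small enumeration. The sketch below is an illustration only, not a procedure taken from the book: it assumes two samples with no tied values, uses the rank sum of the first sample as the statistic (the idea behind rank-sum tests such as Wilcoxon–Mann–Whitney), and the function names and the sample sizes 3 and 4 are hypothetical.

```python
from collections import Counter
from itertools import combinations


def rank_sum_null_distribution(n1, n2):
    """Exact H0 distribution of the rank sum of sample 1 (assumes no ties).

    Under H0 every choice of n1 ranks out of 1..n1+n2 is equally likely,
    so the distribution is obtained by counting rank sums over all choices.
    """
    ranks = range(1, n1 + n2 + 1)
    counts = Counter(sum(chosen) for chosen in combinations(ranks, n1))
    total = sum(counts.values())
    return {w: c / total for w, c in sorted(counts.items())}


def left_tail_probability(n1, n2, w_observed):
    """P(rank sum <= w_observed) under H0."""
    dist = rank_sum_null_distribution(n1, n2)
    return sum(p for w, p in dist.items() if w <= w_observed)


# Hypothetical case: sample 1 (n1 = 3) holds the three smallest of 7 pooled
# values, so its rank sum is 1 + 2 + 3 = 6, the smallest possible value.
p = left_tail_probability(3, 4, 6)
print(round(p, 4))  # 0.0286, i.e. 1 out of the 35 equally likely rank sets
```

Because the distribution depends only on the sample sizes, it can be tabulated once, with no assumption about the shape of the populations.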

Page 16 :

EXAMPLES OF TEST PROCEDURES

Test 7  t-test for a population mean (variance unknown)

Hypotheses and alternatives:
1. H0: µ = µ0, H1: µ ≠ µ0
2. H0: µ = µ0, H1: µ > µ0

Test statistics:
$t = \dfrac{\bar{x} - \mu_0}{s/\sqrt{n}}$, where $s^2 = \dfrac{\sum (x - \bar{x})^2}{n - 1}$.

When used: If σ² is not known and the estimate s² of σ² is based on a small sample (i.e. n < 20) and a normal population.

Critical region and degrees of freedom: DF = n − 1
1. Two-sided: 0.025 in each tail, with critical values $-t_{n-1;\,0.025}$ and $t_{n-1;\,0.025}$.
2. One-sided: 0.05 in the tail, with critical value $t_{n-1;\,0.05}$.

Data: H0: µ0 = 4.0, n = 9, x̄ = 3.1, s = 1.0, ∴ t = −2.7

Conclusion:
1. $t_{8;\,0.025} = \pm 2.306$ [see Table 2]. Reject H0.
2. $t_{8;\,0.05} = -1.860$ (left-hand side) [see Table 2]. Reject H0.
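The arithmetic of Test 7 can be checked with a few lines of Python. This is a minimal sketch using only the summary figures quoted in the example; the function name is hypothetical, and the critical values are copied from the text (Table 2) rather than computed.

```python
import math


def one_sample_t(sample_mean, mu0, s, n):
    """t statistic for a population mean when the variance is unknown."""
    return (sample_mean - mu0) / (s / math.sqrt(n))


# Summary data from the worked example: mu0 = 4.0, n = 9, mean = 3.1, s = 1.0
t = one_sample_t(sample_mean=3.1, mu0=4.0, s=1.0, n=9)

# Critical values for 8 degrees of freedom, as quoted in the text (Table 2)
T_CRIT_TWO_SIDED = 2.306  # t_{8; 0.025}
T_CRIT_ONE_SIDED = 1.860  # t_{8; 0.05}

reject_two_sided = abs(t) >= T_CRIT_TWO_SIDED
reject_left_sided = t <= -T_CRIT_ONE_SIDED  # left-hand side, as in the example

print(round(t, 1), reject_two_sided, reject_left_sided)  # -2.7 True True
```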

Page 18 :

Test 10  Method of paired comparisons

Hypotheses and alternatives:
1. H0: µd = 0, H1: µd ≠ 0
2. H0: µd = 0, H1: µd > 0

Test statistics:
$t = \dfrac{\bar{d} - \mu_d}{s/\sqrt{n}}$, where $d_i = x_i - y_i$ is the difference in the n paired observations and $\bar{d}$ is the mean of these differences.

When used: When an experiment is arranged so that each observation in one sample can be ‘paired’ with a value from the second sample and the populations are normally distributed.

Critical region and degrees of freedom: DF = n − 1
1. Two-sided: 0.025 in each tail, with critical values $-t_{n-1;\,0.025}$ and $t_{n-1;\,0.025}$.
2. One-sided: 0.05 in the tail, with critical value $t_{n-1;\,0.05}$.

Data: n = 16, d̄ = 1.0, s = 1.0, ∴ t = 4.0

Conclusion:
1. $t_{15;\,0.025} = \pm 2.131$ [see Table 2]. Reject H0.
2. $t_{15;\,0.05} = 1.753$ [see Table 2]. Reject H0.
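The paired-comparison statistic is computed from the differences rather than from the two samples separately. The sketch below first reproduces t = 4.0 from the summary figures in the example, then shows the same calculation starting from raw pairs; the raw values `x` and `y` are made up for illustration and do not come from the book, and the function name is hypothetical.

```python
import math
from statistics import mean, stdev


def paired_t(x, y, mu_d=0.0):
    """Return (mean difference, sample s.d. of differences, t statistic)."""
    d = [xi - yi for xi, yi in zip(x, y)]
    n = len(d)
    d_bar = mean(d)
    s = stdev(d)  # sample standard deviation, divisor n - 1
    return d_bar, s, (d_bar - mu_d) / (s / math.sqrt(n))


# Check against the summary data of the example: n = 16, d_bar = 1.0, s = 1.0
t_summary = (1.0 - 0.0) / (1.0 / math.sqrt(16))
print(t_summary)  # 4.0

# Hypothetical raw pairs (illustration only), differences are 1, 2, 1, 2
x = [5, 7, 6, 8]
y = [4, 5, 5, 6]
d_bar, s, t = paired_t(x, y)
```

With raw data the degrees of freedom are the number of pairs minus one, so the hypothetical four pairs would be compared with the table entry for 3 degrees of freedom.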

Page 21 :

Test 37  χ²-test for goodness of fit

Hypotheses and alternatives: Goodness of fit for Poisson distribution with known mean λ

Test statistics:
$\chi^2 = \sum \dfrac{(O_i - E_i)^2}{E_i}$
$O_i$ is the ith observed frequency, i = 1 to k; $E_i$ is the expected frequency, where $E_i$ must be > 5.

When used: To compare observed frequencies against those obtained under assumptions about the parent populations.

Critical region and degrees of freedom: Using α = 0.05 [see Table 5]. Right-sided: 0.05 in the upper tail.
DF: variable, normally one less than the number of frequency comparisons (k) in the summation in the test statistic.

Data: H0: Distribution is Poisson with λ = 2.

xi      Oi      Ei
0       10      13.5
1       27      27.0
2       30      27.0
3       19      18.0
4        8       9.0
≥5       6       5.5

∴ χ² = 1.45

Conclusion: v = 5. $\chi^2_{5;\,0.05} = 11.07$ [see Table 5]. Do not reject H0.
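The value χ² = 1.45 can be reproduced directly from the observed and expected columns of the table. This is a minimal sketch: the expected frequencies are taken as printed in the example rather than recomputed from the Poisson formula, the critical value is the one quoted from Table 5, and the function name is hypothetical.

```python
def chi_square(observed, expected):
    """Sum of (O - E)^2 / E over all frequency classes."""
    return sum((o - e) ** 2 / e for o, e in zip(observed, expected))


# Frequencies from the worked example (classes x = 0, 1, 2, 3, 4, >= 5)
observed = [10, 27, 30, 19, 8, 6]
expected = [13.5, 27.0, 27.0, 18.0, 9.0, 5.5]

chi2 = chi_square(observed, expected)
df = len(observed) - 1  # one less than the number of classes, here 5

CHI2_CRIT = 11.07  # chi-square critical value for 5 DF at 0.05, from the text
reject = chi2 >= CHI2_CRIT

print(round(chi2, 2), df, reject)  # 1.45 5 False
```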

Page 22 :

Test 44  χ²-test for independence

Hypotheses and alternatives: Contingency table

Test statistics:
$\chi^2 = \sum \dfrac{(O_i - E_i)^2}{E_i}$ [see Table 5]

When used: Given a bivariate frequency table for attributes with m and n levels.

Critical region and degrees of freedom: Using α = 0.05 [see Table 5]. DF = (n − 1)(m − 1). Right-sided: 0.05 in the upper tail, beyond $\chi^2_{\nu;\,0.05}$.

Data:

           Machine I       Machine II
Grade      Oi      Ei      Oi      Ei      Total
A           3       4       7       6       10
B           9       8      11      12       20
C           8       8      12      12       20
Total      20      20      30      30       50

χ² = 0.625

Conclusion: $\chi^2_{2;\,0.05} = 5.99$ [see Table 5]. Do not reject H0. The grades are independent of the machine.
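In a contingency table the expected frequencies follow from the row and column totals (row total × column total ÷ grand total), which is how the Ei columns above arise. The sketch below recomputes them and the statistic from the observed counts alone; the function names are hypothetical and the critical value is the one quoted from Table 5.

```python
def expected_counts(table):
    """Expected cell counts under independence: row total * column total / N."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    grand_total = sum(row_totals)
    return [[r * c / grand_total for c in col_totals] for r in row_totals]


def chi_square_independence(table):
    """Return (chi-square statistic, degrees of freedom, expected counts)."""
    exp = expected_counts(table)
    chi2 = sum(
        (o - e) ** 2 / e
        for obs_row, exp_row in zip(table, exp)
        for o, e in zip(obs_row, exp_row)
    )
    df = (len(table) - 1) * (len(table[0]) - 1)
    return chi2, df, exp


# Observed counts from the example: rows are grades A, B, C;
# columns are machines I and II.
observed = [[3, 7], [9, 11], [8, 12]]

chi2, df, expected = chi_square_independence(observed)
CHI2_CRIT = 5.99  # chi-square critical value for 2 DF at 0.05, from the text
reject = chi2 >= CHI2_CRIT

print(round(chi2, 3), df, reject)  # 0.625 2 False
```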

Page 23 :

LIST OF TESTS

Test 1 To investigate the significance of the difference between an assumed population mean and sample mean when the population variance is known.
Test 2 To investigate the significance of the difference between the means of two samples when the variances are known and equal.
Test 3 To investigate the significance of the difference between the means of two samples when the variances are known and unequal.
Test 4 To investigate the significance of the difference between an assumed proportion and an observed proportion.
Test 5 To investigate the assumption that the proportions of elements from two populations are equal, based on two samples, one from each population.
Test 6 To investigate the significance of the difference between two counts.
Test 7 To investigate the significance of the difference between an assumed population mean and a sample mean when the population variance is unknown.
Test 8 To investigate the significance of the difference between the means of two populations when the population variances are unknown but equal.
Test 9 To investigate the significance of the difference between the means of two populations when the population variances are unknown and unequal.
Test 10 To investigate the significance of the difference between two population means when no assumption is made about the population variances.
Test 11 To investigate the significance of the regression coefficient.
Test 12 To investigate whether the difference between the sample correlation coefficient and zero is statistically significant.
Test 13 To investigate the significance of the difference between a correlation coefficient and a specified value.
Test 14 To investigate the significance of the difference between the correlation coefficients for a pair of variables occurring from two different populations.
Test 15 To investigate the difference between a sample variance and an assumed population variance.
Test 16 To investigate the significance of the difference between two population variances.
Test 17 To investigate the difference between two population variances when there is correlation between the pairs of observations.
Test 18 To compare the results of two experiments, each of which yields a multivariate result. In other words, we wish to know if the mean pattern obtained from the first experiment agrees with the mean pattern obtained for the second.
Test 19 To investigate the origin of one series of values for random variates, when one of two markedly different populations may have produced that particular series.

Page 24 :

Test 20 To investigate the significance of the difference between a frequency distribution based on a given sample and a normal frequency distribution with the same mean and the same variance.
Test 21 To investigate the significance of the difference between a suspicious extreme value and other values in the sample.
Test 22 To test the null hypothesis that the K samples came from K populations with the same mean.
Test 23 To investigate the significance of the difference between two correlated proportions.
Test 24 To investigate the significance of the difference between population variance and an assumed value.
Test 25 To investigate the significance of the difference between two counted results.
Test 26 To investigate the significance of the difference between the overall mean of K subpopulations and an assumed value for the population mean.
Test 27 To investigate which particular set of mean values or linear combination of mean values shows differences with the other mean values.
Test 28 To investigate the significance of all possible differences between population means when the sample sizes are unequal.
Test 29 To investigate the significance of all possible differences between population means when the sample sizes are equal.
Test 30 To investigate the significance of the differences when several treatments are compared with a control.
Test 31 To investigate the significance of the differences between the variances of samples drawn from normally distributed populations.
Test 32 To investigate the significance of the differences between the variances of normally distributed populations when the sample sizes are equal.
Test 33 To investigate the significance of the difference between a frequency distribution based on a given sample and a normal frequency distribution.
Test 34 To investigate the significance of the difference between one rather large variance and other variances.
Test 35 To investigate the significance of the difference between an observed distribution and specified population distribution.
Test 36 To investigate the significance of the difference between two population distributions, based on two sample distributions.
Test 37 To investigate the significance of the differences between observed frequencies and theoretically expected frequencies.
Test 38 To investigate the significance of the differences between counts.
Test 39 To investigate the significance of the differences between observed frequencies for two dichotomous distributions.
Test 40 To investigate the significance of the differences between observed frequencies for two dichotomous distributions when the sample sizes are large.

Page 25 :

Test 41 To investigate the significance of the differences between observed frequency distributions with a dichotomous classification.
Test 42 To investigate the significance of the differences between distributions of alternative data.
Test 43 To investigate the significance of the differences between two distributions based on two samples spread over some classes.
Test 44 To investigate the difference in frequency when classified by one attribute after classification by a second attribute.
Test 45 To investigate the significance of the difference between the population median and a specified value.
Test 46 To investigate the significance of the difference between the medians of two distributions when the observations are paired.
Test 47 To investigate the significance of the difference between a population mean and a specified value.
Test 48 To investigate the significance of the difference between the means of two similarly shaped distributions.
Test 49 To test if two random samples could have come from two populations with the same frequency distribution.
Test 50 To test if two random samples could have come from two populations with the same frequency distribution.
Test 51 To test if K random samples could have come from K populations with the same frequency distribution.
Test 52 To test if two random samples could have come from two populations with the same means.
Test 53 To test if two random samples could have come from two populations with the same variance.
Test 54 To test if K random samples could have come from K populations with the same mean.
Test 55 To test if K random samples came from populations with the same mean.
Test 56 To investigate the difference between the largest mean and K − 1 other population means.
Test 57 To test the null hypothesis that all treatments have the same effect as the control treatment.
Test 58 To investigate the significance of the correlation between two series of observations obtained in pairs.
Test 59 To investigate the significance of the correlation between two series of observations obtained in pairs.
Test 60 To test the null hypothesis that the mean µ of a population with known variance has the value µ0 rather than the value µ1.
Test 61 To test the null hypothesis that the standard deviation σ of a population with a known mean has the value σ0 rather than the value σ1.

Page 26 :

Test 62 To test the null hypothesis that the parameter of a population has the value p0 rather than p1.
Test 63 To test the null hypothesis that the fluctuations in a series have a random nature.
Test 64 To test the null hypothesis that the fluctuations in a series have a random nature. Series could be serially correlated.
Test 65 To test the null hypothesis that the variations in a series are independent of the order of the observations.
Test 66 To test the null hypothesis that the fluctuations of a sample are independent of the order in the sequence.
Test 67 To test the null hypothesis that observations in a sample are independent of the order in the sequence.
Test 68 To test the null hypothesis that two samples have been randomly selected from the same population.
Test 69 To test the significance of the order of the observations in a sample.
Test 70 To test the random occurrence of plus and minus signs in a sequence of observations.
Test 71 To test that the fluctuations in a sample have a random nature.
Test 72 To compare the significance of the differences in response for K treatments applied to n subjects.
Test 73 To investigate the significance of the differences in response for K treatments applied to n subjects.
Test 74 To investigate the significance of the correlation between n series of rank numbers, assigned by n members of a committee to K subjects.
Test 75 To test a model for the distribution of a random variable of the continuous type.
Test 76 To test the equality of h independent multinomial distributions.
Test 77 To test for non-additivity in a two-way classification.
Test 78 To test the various effects for a two-way classification with an equal number of observations per cell.
Test 79 To test the main effects in the case of a two-way classification with unequal numbers of observations per cell.
Test 80 To test for nestedness in the case of a nested or hierarchical classification.
Test 81 To test the presence of regression of variable Y on the observed value X.
Test 82 To test the linearity of regression between the X variable and the Y variable.
Test 83 To test the significance of the reduction of uncertainty of past events.
Test 84 To test the significance of the difference in sequential connections across groups.

Page 27 :

Test 85 To test whether the population value of each regression coefficient is zero in a multiple regression model.
Test 86 To test the variances in a balanced random effects model of random variables.
Test 87 To test the interaction effects in a two-way classification random effects model with equal number of observations per cell.
Test 88 To test a parameter of a rectangular population using the likelihood ratio method.
Test 89 To test a parameter of an exponential population using the uniformly most powerful test method.
Test 90 To test the parameter of a Bernoulli population using the sequential test method.
Test 91 To test the ratio between the mean and the standard deviation of a normal population where both are unknown, using the sequential method.
Test 92 To test whether the error terms in a regression model are autocorrelated.
Test 93 To test the medians of two populations.
Test 94 To test whether a proposed distribution is a suitable probabilistic model for the sample data.
Test 95 To test whether the observed angles have a tendency to cluster around a given angle, indicating a lack of randomness in the distribution.
Test 96 To test whether the given distribution fits a random sample of angular values.
Test 97 To test whether two samples from circular observations differ significantly from each other with respect to mean direction or angular variance.
Test 98 To test whether the mean angles of two independent circular observations differ significantly from each other.
Test 99 To test whether two independent random samples from circular observations differ significantly from each other with respect to mean angle, angular variance or both.
Test 100 To test whether the treatment effects of independent samples from von Mises populations differ significantly from each other.

Page 28 :

CLASSIFICATION OF TESTS, , Test numbers, For linear data, , 1 sample, , 2 samples, , K samples, , Parametric classical tests, for central tendency, , 1, 7, 19, , 2, 3, 8, 9, 10, 18, , for proportion, for variability, for distribution functions, for association, for probability, , 4, 15, 21, 24, 34, 20, 33, 75, 88, 89, 94, 11, 12, 13, 81, 82, 83, , 5, 6, 25, 16, 17, –, 14, 23, 84, 92, –, , 22, 26, 27, 28, 29, 30,, 77, 78, 79, 80, 87, –, 31, 32, 86, 76, 85, –, , Parametric tests, for distribution function, , 35, 37, , 36, 39, 40, , 38, 41, 42, 43, 44, , 45, 47, –, –, 58, 59, 63, 64, 65, 66, 67,, 69, 70, 71, , 46, 48, 50, 52, 93, 53, 49, –, 68, , 51, 54, 55, 56, 57, –, –, 72, 73, 74, –, , 60, 90, 61, 62, 91, , –, –, –, –, , –, –, –, –, , Distribution-free tests, for central tendency, for variability, for distribution functions, for association, for randomness, , Sequential tests, central tendency, variability, for proportion, for ratio, , Test numbers, For circular data, , 1 sample, , 2 samples, , K samples, , Parametric tests, for randomness, for distribution function, for central tendency, for variability, , 95, 96, –, –, , –, –, 97, 98, 99, , –, –, –, 100

Page 30 :

THE TESTS

Test 1 Z-test for a population mean (variance known)

Object
To investigate the significance of the difference between an assumed population mean $\mu_0$ and a sample mean $\bar{x}$.

Limitations
1. It is necessary that the population variance $\sigma^2$ is known. (If $\sigma^2$ is not known, see the t-test for a population mean (Test 7).)
2. The test is accurate if the population is normally distributed. If the population is not normal, the test will still give an approximate guide.

Method
From a population with assumed mean $\mu_0$ and known variance $\sigma^2$, a random sample of size n is taken and the sample mean $\bar{x}$ calculated. The test statistic

$$Z = \frac{\bar{x} - \mu_0}{\sigma/\sqrt{n}}$$

may be compared with the standard normal distribution using either a one- or two-tailed test, with critical region of size α.

Example
For a particular range of cosmetics a filling process is set to fill tubs of face powder with 4 gm on average and standard deviation 1 gm. A quality inspector takes a random sample of nine tubs and weighs the powder in each. The average weight of powder is 4.6 gm. What can be said about the filling process?

A two-tailed test is used if we are concerned about over- and under-filling. In this case Z = 1.8 and our acceptance range is −1.96 < Z < 1.96, so we do not reject the null hypothesis. That is, there is no reason to suggest, for this sample, that the filling process is not running on target.

On the other hand, if we are only concerned about over-filling of the cosmetic then a one-tailed test is appropriate. The acceptance region is now Z < 1.645. Notice that we have fixed our probability, which determines our acceptance or rejection of the null hypothesis, at 0.05 (or 5 per cent) whether the test is one- or two-tailed.
So now we reject the null hypothesis and can reasonably suspect that we are over-filling the tubs with cosmetic.

Quality control inspectors would normally take regular small samples to detect the departure of a process from its target, but the basis of this process is essentially that suggested above.
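The face-powder example can be checked with a few lines of Python. This is a minimal sketch using only the standard library; the function name `z_one_sample` is an illustrative choice, and the critical values are computed with `statistics.NormalDist` rather than read from Table 1.

```python
from math import sqrt
from statistics import NormalDist

def z_one_sample(xbar, mu0, sigma, n):
    """Z statistic for a sample mean when the population sigma is known."""
    return (xbar - mu0) / (sigma / sqrt(n))

# Figures from the example: target 4 gm, sigma 1 gm, nine tubs averaging 4.6 gm.
z = z_one_sample(xbar=4.6, mu0=4.0, sigma=1.0, n=9)

two_tailed_crit = NormalDist().inv_cdf(0.975)  # about 1.96
one_tailed_crit = NormalDist().inv_cdf(0.95)   # about 1.645

reject_two_tailed = abs(z) > two_tailed_crit   # over- or under-filling
reject_one_tailed = z > one_tailed_crit        # over-filling only
```

With these inputs the two-tailed test does not reject while the one-tailed test does, matching the discussion above.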

Page 32 :

Test 2 Z-test for two population means (variances known and equal)

Object
To investigate the significance of the difference between the means of two populations.

Limitations
1. Both populations must have equal variances and this variance $\sigma^2$ must be known. (If $\sigma^2$ is not known, see the t-test for two population means (Test 8).)
2. The test is accurate if the populations are normally distributed. If not normal, the test may be regarded as approximate.

Method
Consider two populations with means $\mu_1$ and $\mu_2$. Independent random samples of size $n_1$ and $n_2$ are taken which give sample means $\bar{x}_1$ and $\bar{x}_2$. The test statistic

$$Z = \frac{(\bar{x}_1 - \bar{x}_2) - (\mu_1 - \mu_2)}{\sigma\left(\dfrac{1}{n_1} + \dfrac{1}{n_2}\right)^{1/2}}$$

may be compared with the standard normal distribution using either a one- or two-tailed test.

Example
Two teams of financial sales persons are compared to see if it is likely that the instruction each has received could have led to differing success rates. A sample of nine transactions (which involves the whole team) yields an average success rate of 1.2. Similarly a sample of 16 transactions for the second team yields a success rate of 1.7. The variances for both teams are equal to 2.0750 (standard deviation 1.4405). The success rate is calculated using a range of output measures for a transaction.

If we are only interested to know of a difference between the two teams then a two-tailed test is appropriate. In this case we accept the null hypothesis and can assume that both teams are equally successful. This is because our acceptance region is −1.96 < Z < 1.96 and we have computed a Z value, for this sample, of −0.833.

On the other hand, if we suspect that the first team had received better training than the second team we would use a one-tailed test. For our example, here, this is certainly not the case since our Z value is negative. Our acceptance region is Z < 1.645.
Since the performance is in the wrong direction we don't even need to perform a calculation. Notice that we are not doing all possible combinations of tests so that we can find a significant result. Our test is based on our design of the 'experiment' or survey planned before we collect any data. Our data do not have a bearing on the form of the testing.
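A short Python sketch of the Test 2 statistic, evaluated on the sales-team figures. The function name and the `delta0` parameter (the hypothesised difference $\mu_1 - \mu_2$, zero here) are illustrative assumptions.

```python
from math import sqrt

def z_two_means_common_sigma(xbar1, xbar2, sigma, n1, n2, delta0=0.0):
    """Z statistic for two means with a known, common standard deviation."""
    return ((xbar1 - xbar2) - delta0) / (sigma * sqrt(1 / n1 + 1 / n2))

# Team 1: mean 1.2 over 9 transactions; team 2: mean 1.7 over 16; variance 2.0750.
z = z_two_means_common_sigma(1.2, 1.7, sqrt(2.0750), n1=9, n2=16)

# Two-tailed 5 per cent acceptance region quoted in the example.
within_acceptance_region = -1.96 < z < 1.96
```

The result rounds to −0.833, inside the acceptance region.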

Page 34 :

Test 3 Z-test for two population means (variances known and unequal)

Object
To investigate the significance of the difference between the means of two populations.

Limitations
1. It is necessary that the two population variances be known. (If they are not known, see the t-test for two population means (Test 9).)
2. The test is accurate if the populations are normally distributed. If not normal, the test may be regarded as approximate.

Method
Consider two populations with means $\mu_1$ and $\mu_2$ and variances $\sigma_1^2$ and $\sigma_2^2$. Independent random samples of size $n_1$ and $n_2$ are taken and sample means $\bar{x}_1$ and $\bar{x}_2$ are calculated. The test statistic

$$Z = \frac{(\bar{x}_1 - \bar{x}_2) - (\mu_1 - \mu_2)}{\left(\dfrac{\sigma_1^2}{n_1} + \dfrac{\sigma_2^2}{n_2}\right)^{1/2}}$$

may be compared with the standard normal distribution using either a one- or two-tailed test.

Example
Brand A of a jumbo-sized pack of potato crisps is known to have a more variable weight than brand B of potato crisps. Population variances are 0.000576 gm² and 0.001089 gm², respectively. The respective means for samples of size 13 and 8 are 80.02 gm and 79.98 gm.

Is there a difference between the two brands in terms of the weights of the jumbo packs? We do not have any pre-conceived notion of which brand might be 'heavier' so we use a two-tailed test. Our acceptance region is −1.96 < Z < 1.96 and our calculated Z value is 2.98. We therefore reject our null hypothesis and can conclude that there is a difference with brand B yielding a heavier pack of crisps.

Numerical calculation
$n_1 = 13$, $n_2 = 8$, $\bar{x}_1 = 80.02$, $\bar{x}_2 = 79.98$, $\sigma_1^2 = 0.000576$, $\sigma_2^2 = 0.001089$
$Z = 2.98$
Critical value $Z_{0.05} = 1.96$ [Table 1].
Reject the null hypothesis of no difference between means.
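The numerical calculation above can be reproduced with the following Python sketch. The function name is illustrative, and the sample labelled 1 in the calculation is passed first.

```python
from math import sqrt

def z_two_means_known_vars(xbar1, xbar2, var1, var2, n1, n2, delta0=0.0):
    """Z statistic for two means with known, unequal population variances."""
    return ((xbar1 - xbar2) - delta0) / sqrt(var1 / n1 + var2 / n2)

z = z_two_means_known_vars(80.02, 79.98, 0.000576, 0.001089, n1=13, n2=8)
reject_h0 = abs(z) > 1.96   # two-tailed 5 per cent critical value from Table 1
```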

Page 35 :

Test 4 Z-test for a proportion (binomial distribution)

Object
To investigate the significance of the difference between an assumed proportion $p_0$ and an observed proportion $p$.

Limitations
The test is approximate and assumes that the number of observations in the sample is sufficiently large (i.e. n ≥ 30) to justify the normal approximation to the binomial.

Method
A random sample of n elements is taken from a population in which it is assumed that a proportion $p_0$ belongs to a specified class. The proportion $p$ of elements in the sample belonging to this class is calculated. The test statistic is

$$Z = \frac{|p - p_0| - 1/(2n)}{\left\{\dfrac{p_0(1 - p_0)}{n}\right\}^{1/2}}.$$

This may be compared with a standard normal distribution using either a one- or two-tailed test.

Example
The pass rate for a national statistics test has been 0.5, or 50 per cent, for some years. A random sample of 100 papers from independent (or non-college based) students yields a pass rate of 40 per cent. Does this show a significant difference? Our computed Z is −2.0 and our acceptance region is −1.96 < Z < 1.96. So we reject the null hypothesis and conclude that there is a difference in pass rates. In this case, the independent students fare worse than those attending college. While we might have expected this, there are other possible factors that could point to either an increase or decrease in the pass rate. Our two-tailed test affirms our ignorance of the possible direction of a difference, if one exists.

Numerical calculation
$n = 100$, $p = 0.4$, $p_0 = 0.5$
$Z = −2.1$
Critical value $Z_{0.05} = ±1.96$ [Table 1].
Reject the null hypothesis of no difference in proportions.
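The statistic in the Method can be sketched in Python as follows. The `continuity` flag and the re-attached sign are conveniences assumed here, not part of the source. The example's figures are evaluated both with and without the 1/(2n) term: the formula as written gives a magnitude of 1.9 with the term and 2.0 without it.

```python
from math import sqrt

def z_proportion(p, p0, n, continuity=True):
    """Z statistic for an observed proportion p against an assumed p0.

    With continuity=True the 1/(2n) correction from the Method is applied.
    The sign of (p - p0) is re-attached so the direction stays visible.
    """
    diff = abs(p - p0)
    if continuity:
        diff -= 1 / (2 * n)
    z = diff / sqrt(p0 * (1 - p0) / n)
    return z if p >= p0 else -z

z_corrected = z_proportion(0.4, 0.5, 100)                 # with 1/(2n)
z_plain = z_proportion(0.4, 0.5, 100, continuity=False)   # without it
```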

Page 36 :

Test 5 Z-test for the equality of two proportions (binomial distribution)

Object
To investigate the assumption that the proportions $\pi_1$ and $\pi_2$ of elements from two populations are equal, based on two samples, one from each population.

Limitations
The test is approximate and assumes that the number of observations in the two samples is sufficiently large (i.e. $n_1, n_2$ ≥ 30) to justify the normal approximation to the binomial.

Method
It is assumed that the populations have proportions $\pi_1$ and $\pi_2$ with the same characteristic. Random samples of size $n_1$ and $n_2$ are taken and respective proportions $p_1$ and $p_2$ calculated. The test statistic is

$$Z = \frac{p_1 - p_2}{\left\{P(1 - P)\left(\dfrac{1}{n_1} + \dfrac{1}{n_2}\right)\right\}^{1/2}}$$

where

$$P = \frac{p_1 n_1 + p_2 n_2}{n_1 + n_2}.$$

Under the null hypothesis that $\pi_1 = \pi_2$, Z is approximately distributed as a standard normal deviate and the resulting test may be either one- or two-tailed.

Example
Two random samples are taken from two populations, which are two makes of clock mechanism produced in different factories. The first sample of size 952 yielded the proportion of clock mechanisms, giving accuracy not within fixed acceptable limits over a period of time, to be 0.325 per cent. The second sample of size 1168 yielded 5.73 per cent. What can be said about the two populations of clock mechanisms? Are they significantly different? Again, we do not have any pre-conceived notion of whether one mechanism is better than the other, so a two-tailed test is employed.

With a Z value of −6.93 and an acceptance region of −1.96 < Z < 1.96, we reject the null hypothesis and conclude that there is a significant difference between the mechanisms in terms of accuracy. The second mechanism is significantly less accurate than the first.

Numerical calculation
$n_1 = 952$, $n_2 = 1168$, $p_1 = 0.00325$, $p_2 = 0.0573$
$Z = −6.93$
Critical value $Z_{0.05} = ±1.96$ [Table 1].
Reject the null hypothesis.
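A Python sketch of the pooled-proportion statistic, run on the clock-mechanism figures. The function name is illustrative.

```python
from math import sqrt

def z_two_proportions(p1, p2, n1, n2):
    """Z statistic for the equality of two proportions, using pooled P."""
    pooled = (p1 * n1 + p2 * n2) / (n1 + n2)
    se = sqrt(pooled * (1 - pooled) * (1 / n1 + 1 / n2))
    return (p1 - p2) / se

z = z_two_proportions(0.00325, 0.0573, n1=952, n2=1168)
reject_h0 = abs(z) > 1.96   # two-tailed 5 per cent critical value from Table 1
```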

Page 37 :

Test 6 Z-test for comparing two counts (Poisson distribution)

Object
To investigate the significance of the difference between two counts.

Limitations
The test is approximate and assumes that the counts are large enough for the normal approximation to the Poisson to apply.

Method
Let $n_1$ and $n_2$ be the two counts taken over times $t_1$ and $t_2$, respectively. Then the two average frequencies are $R_1 = n_1/t_1$ and $R_2 = n_2/t_2$. To test the assumption of equal average frequencies we use the test statistic

$$Z = \frac{R_1 - R_2}{\left(\dfrac{R_1}{t_1} + \dfrac{R_2}{t_2}\right)^{1/2}}.$$

This may be compared with a standard normal distribution using either a one-tailed or two-tailed test.

Example
Two traffic roundabouts are compared for intensity of traffic at non-peak times with steady conditions. Roundabout one has 952/2 arrivals over 11 minutes and roundabout two has 1168/2 arrivals over 15 minutes. The arrival rates, per minute, are therefore 476/11 (43.27) and 584/15 (38.93) respectively.

What do these results say about the two arrival rates or frequency taken over the two time intervals? We calculate a Z value of 2.4 and have an acceptance region of −1.96 < Z < 1.96. So we reject the null hypothesis of no difference between the two rates. Roundabout one has an intensity of arrival significantly higher than roundabout two.

Numerical calculation
$n_1 = 952$, $n_2 = 1168$, $t_1 = 22$, $t_2 = 30$
$R_1 = n_1/t_1 = 43.27$, $R_2 = n_2/t_2 = 38.93$

$$Z = \frac{R_1 - R_2}{\left(\dfrac{R_1}{t_1} + \dfrac{R_2}{t_2}\right)^{1/2}} = \frac{4.34}{(3.26)^{1/2}} = \frac{4.34}{1.81} = 2.40$$

Critical value $Z_{0.05} = 1.96$ [Table 1].
Reject the null hypothesis of no difference between the counts.
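The same arithmetic in Python, as a minimal sketch. The function name is illustrative; the counts and times are those of the numerical calculation.

```python
from math import sqrt

def z_two_counts(n1, t1, n2, t2):
    """Z statistic comparing two Poisson counts observed over times t1, t2."""
    r1, r2 = n1 / t1, n2 / t2            # average frequencies R1 and R2
    return (r1 - r2) / sqrt(r1 / t1 + r2 / t2)

z = z_two_counts(n1=952, t1=22, n2=1168, t2=30)
reject_h0 = abs(z) > 1.96   # two-tailed 5 per cent critical value from Table 1
```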

Page 38 :

Test 7 t-test for a population mean (variance unknown)

Object
To investigate the significance of the difference between an assumed population mean $\mu_0$ and a sample mean $\bar{x}$.

Limitations
1. If the variance of the population $\sigma^2$ is known, a more powerful test is available: the Z-test for a population mean (Test 1).
2. The test is accurate if the population is normally distributed. If the population is not normal, the test will give an approximate guide.

Method
From a population with assumed mean $\mu_0$ and unknown variance, a random sample of size n is taken and the sample mean $\bar{x}$ calculated as well as the sample standard deviation using the formula

$$s = \left\{\frac{\sum_{i=1}^{n}(x_i - \bar{x})^2}{n - 1}\right\}^{1/2}.$$

The test statistic is

$$t = \frac{\bar{x} - \mu_0}{s/\sqrt{n}}$$

which may be compared with Student's t-distribution with n − 1 degrees of freedom. The test may be either one-tailed or two-tailed.

Example
A sample of nine plastic nuts yielded an average diameter of 3.1 cm with estimated standard deviation of 1.0 cm. It is assumed from design and manufacturing requirements that the population mean of nuts is 4.0 cm. What does this say about the mean diameter of plastic nuts being produced? Since we are concerned about both under- and over-sized nuts (for different reasons) a two-tailed test is appropriate.

Our computed t value is −2.7 and the acceptance region is −2.3 < t < 2.3. We reject the null hypothesis and accept the alternative hypothesis of a difference between the sample and population means. There is a significant difference (a drop in fact) in the mean diameters of plastic nuts (i.e. between the sample and population).
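A minimal Python sketch of the plastic-nuts example. The function name is illustrative, and the critical value 2.306 (two-tailed 5 per cent point of t with 8 degrees of freedom) is taken from standard tables; the example quotes it rounded to 2.3.

```python
from math import sqrt

def t_one_sample(xbar, mu0, s, n):
    """t statistic for a sample mean when sigma is estimated by s."""
    return (xbar - mu0) / (s / sqrt(n))

t = t_one_sample(xbar=3.1, mu0=4.0, s=1.0, n=9)
df = 9 - 1

T_CRIT_8DF = 2.306            # from standard t tables, not computed here
reject_h0 = abs(t) > T_CRIT_8DF
```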

Page 40 :

Test 8 t-test for two population means (variances unknown but equal)

Object
To investigate the significance of the difference between the means of two populations.

Limitations
1. If the variance of the populations is known, a more powerful test is available: the Z-test for two population means (Test 2).
2. The test is accurate if the populations are normally distributed. If the populations are not normal, the test will give an approximate guide.

Method
Consider two populations with means $\mu_1$ and $\mu_2$. Independent random samples of size $n_1$ and $n_2$ are taken from which sample means $\bar{x}_1$ and $\bar{x}_2$ together with sample variances

$$s_1^2 = \frac{\sum_{i=1}^{n_1}(x_i - \bar{x}_1)^2}{n_1 - 1} \quad\text{and}\quad s_2^2 = \frac{\sum_{i=1}^{n_2}(x_i - \bar{x}_2)^2}{n_2 - 1}$$

are calculated. The best estimate of the population variance is found as

$$s^2 = \frac{(n_1 - 1)s_1^2 + (n_2 - 1)s_2^2}{n_1 + n_2 - 2}.$$

The test statistic is

$$t = \frac{(\bar{x}_1 - \bar{x}_2) - (\mu_1 - \mu_2)}{s\left(\dfrac{1}{n_1} + \dfrac{1}{n_2}\right)^{1/2}}$$

which may be compared with Student's t-distribution with $n_1 + n_2 - 2$ degrees of freedom. The test may be either one-tailed or two-tailed.

Example
Two snack foods are made and sold in 30 gm packets. Random samples of size 12 are taken from the production line of each snack food and means and variances obtained, viz.: mean1 31.75 gm, variance1 112.25 gm²; mean2 28.67 gm, variance2 66.64 gm². What can be said about the two production processes in relation to the weight of packets?

We use a two-tailed test and find that t is 0.798. Our acceptance region is −2.07 < t < 2.07 and so we accept our null hypothesis. So we can conclude that there is no significant difference between the mean weights of packs from the two production lines.
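The snack-food example can be sketched in Python as below. The function name is illustrative, and the variances passed in are assumed to be sample variances with divisor n − 1, which is what the pooled formula requires.

```python
from math import sqrt

def pooled_t(xbar1, xbar2, var1, var2, n1, n2):
    """Pooled-variance t statistic and its degrees of freedom."""
    df = n1 + n2 - 2
    pooled_var = ((n1 - 1) * var1 + (n2 - 1) * var2) / df
    se = sqrt(pooled_var * (1 / n1 + 1 / n2))
    return (xbar1 - xbar2) / se, df

t, df = pooled_t(31.75, 28.67, 112.25, 66.64, n1=12, n2=12)
within_acceptance_region = -2.07 < t < 2.07   # region quoted in the example
```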

Page 42 :

Test 9 t-test for two population means (variances unknown and unequal)

Object
To investigate the significance of the difference between the means of two populations.

Limitations
1. If the variances of the populations are known, a more powerful test is available: the Z-test for two population means (Test 3).
2. The test is approximate if the populations are normally distributed or if the sample sizes are sufficiently large.
3. The test should only be used to test the hypothesis $\mu_1 = \mu_2$.

Method
Consider two populations with means $\mu_1$ and $\mu_2$. Independent random samples of size $n_1$ and $n_2$ are taken from which sample means $\bar{x}_1$ and $\bar{x}_2$ and variances

$$s_1^2 = \frac{\sum_{i=1}^{n_1}(x_i - \bar{x}_1)^2}{n_1 - 1} \quad\text{and}\quad s_2^2 = \frac{\sum_{i=1}^{n_2}(x_i - \bar{x}_2)^2}{n_2 - 1}$$

are calculated. The test statistic is

$$t = \frac{(\bar{x}_1 - \bar{x}_2) - (\mu_1 - \mu_2)}{\left(\dfrac{s_1^2}{n_1} + \dfrac{s_2^2}{n_2}\right)^{1/2}}$$

which may be compared with Student's t-distribution with degrees of freedom given by

$$\nu = \frac{\left(\dfrac{s_1^2}{n_1} + \dfrac{s_2^2}{n_2}\right)^2}{\dfrac{s_1^4}{n_1^2(n_1 - 1)} + \dfrac{s_2^4}{n_2^2(n_2 - 1)}}.$$

Example
Two financial organizations are about to merge and, as part of the rationalization process, some consideration is to be made of service duplication. Two sales teams responsible for essentially identical products are compared by selecting samples from each and reviewing their respective profit contribution levels per employee over a period of two weeks. These are found to be 3166.00 and 2240.40 with estimated

Page 43 :

variances of 6328.27 and 221 661.3 respectively. How do the two teams compare on performance?

We compute a t value of 5.72. Our acceptance region is −2.26 < t < 2.26 so we reject the null hypothesis and accept the alternative. There is a significant difference between the two teams. Team 1 is more productive than team 2.

Numerical calculation
$n_1 = 4$, $n_2 = 9$, $\bar{x}_1 = 3166.0$, $\bar{x}_2 = 2240.4$, $s_1^2 = 6328.67$, $s_2^2 = 221\,661.3$
$t = 5.72$, $\nu = 9$ (rounded)
Critical value $t_{9;\,0.025} = 2.26$ [Table 2].
Reject the null hypothesis.
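A Python sketch of the unequal-variance statistic and its degrees of freedom, using the figures of the numerical calculation (with $s_1^2 = 6328.67$ as printed there). The function name is illustrative.

```python
from math import sqrt

def unequal_variance_t(xbar1, xbar2, var1, var2, n1, n2):
    """t statistic and approximate degrees of freedom for unequal variances."""
    a, b = var1 / n1, var2 / n2
    t = (xbar1 - xbar2) / sqrt(a + b)
    nu = (a + b) ** 2 / (a ** 2 / (n1 - 1) + b ** 2 / (n2 - 1))
    return t, nu

t, nu = unequal_variance_t(3166.0, 2240.4, 6328.67, 221661.3, n1=4, n2=9)
reject_h0 = abs(t) > 2.26   # t with 9 df, two-tailed 5 per cent, from Table 2
```

The degrees of freedom come out just under 9 and are rounded to 9 before the table is consulted.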

Page 44 :

Test 10 t-test for two population means (method of paired comparisons)

Object
To investigate the significance of the difference between two population means, $\mu_1$ and $\mu_2$. No assumption is made about the population variances.

Limitations
1. The observations for the two samples must be obtained in pairs. Apart from population differences, the observations in each pair should be carried out under identical, or almost identical, conditions.
2. The test is accurate if the populations are normally distributed. If not normal, the test may be regarded as approximate.

Method
The differences $d_i$ are formed for each pair of observations. If there are n such pairs of observations, we can calculate the variance of the differences by

$$s^2 = \frac{\sum_{i=1}^{n}(d_i - \bar{d})^2}{n - 1}.$$

Let the means of the samples from the two populations be denoted by $\bar{x}_1$ and $\bar{x}_2$. Then the test statistic becomes

$$t = \frac{(\bar{x}_1 - \bar{x}_2) - 0}{s/n^{1/2}}$$

which follows Student's t-distribution with n − 1 degrees of freedom. The test may be either one-tailed or two-tailed.

Example
To compare the efficacy of two treatments for a respiratory condition, ten patients are selected at random and the treatments are administered using an oral spray. The patients then perform a treadmill exercise until a maximum exercise rate is reached. The times for these are compared. A suitable period of time is ensured between treatments to prevent the effects of the treatments from interacting. Do the two treatments differ? In this case we do not expect one particular treatment to be superior to the other so a two-tailed test is used. We compute a t value of −0.11 and have an acceptance region of −2.26 < t < 2.26. So we accept the null hypothesis of no difference between the two treatments. However, in such situations it is often the case that an improvement over an existing or original treatment is expected. Then a one-tailed test would be appropriate.

Page 45 :

Numerical calculation
$\bar{d} = \bar{x}_1 - \bar{x}_2 = −0.1$, $n = 10$, $\nu = n - 1$, $s = 2.9$
$t = −0.11$, $\nu = 9$
Critical value $t_{9;\,0.025} = 2.26$ [Table 2].
Do not reject the null hypothesis of no difference between means.
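Because only the summary figures are given, the sketch below works from the mean and standard deviation of the paired differences rather than from the raw pairs. The function name is illustrative.

```python
from math import sqrt

def paired_t_from_summary(dbar, s, n):
    """Paired-comparison t statistic from the mean and SD of the differences."""
    return dbar / (s / sqrt(n))

t = paired_t_from_summary(dbar=-0.1, s=2.9, n=10)
df = 10 - 1
within_acceptance_region = -2.26 < t < 2.26   # t with 9 df, from Table 2
```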

Page 46 :

Test 11 t-test of a regression coefficient

Object
To investigate the significance of the regression coefficient of y on x.

Limitations
The usual assumptions for regression should apply, namely:
1. the variable y follows a normal distribution for each value of x;
2. the variance among the y values remains constant for any given values of x.

Method
In order to estimate a linear regression of the form $y = A + B(x - \bar{x})$, a sample of n pairs of points $(x_i, y_i)$ is required. B is called the regression coefficient, and to test the null hypothesis that this is equal to zero we first calculate the sample estimate

$$b = \frac{\sum x_i y_i - \dfrac{1}{n}\sum x_i \sum y_i}{\sum x_i^2 - \dfrac{1}{n}\left(\sum x_i\right)^2}.$$

The variance of the xs and the variance of the ys about the regression line are calculated as follows:

$$s_x^2 = \frac{\sum (x_i - \bar{x})^2}{n - 1} \quad\text{and}\quad s_{y\cdot x}^2 = \frac{\sum \{y_i - \bar{y} - b(x_i - \bar{x})\}^2}{n - 2}$$

where $\bar{x}$ and $\bar{y}$ are the means of the xs and ys, respectively. The test statistic becomes

$$t = \frac{b\,s_x}{s_{y\cdot x}}(n - 1)^{1/2}$$

which follows Student's t-distribution with n − 2 degrees of freedom. The test is normally two-tailed since b may be positive or negative; it may be one-tailed if the alternative hypothesis is directional.

Example
In an investigation of the relationship between a reaction test on a vehicle simulator and a composite test, a sample of 12 male subjects is selected. The composite test of reactions is a much cheaper alternative to a vehicle simulator test. A regression relationship is computed with regression coefficient 5.029 and t value 6.86. The acceptance region for the null hypothesis is −2.23 < t < 2.23. Since the computed t value lies outside the acceptance region we conclude that the slope (b coefficient) is significantly greater than zero and a significant regression exists.

Notice that the test does not tell us how good a predictor x is of y, only that the regression is significant.

Page 47 :

Numerical calculation
$\sum x_i = 766$, $\sum y_i = 1700$, $\sum x_i^2 = 49\,068$, $\sum y_i^2 = 246\,100$, $\sum x_i y_i = 109\,380$
$n = 12$, $\bar{x} = 63.83$, $\bar{y} = 141.67$, $\nu = n - 2$
$s_x^2 = 15.61$, $s_y^2 = 478.8$, $b = 5.029$, $s_{y\cdot x}^2 = 92.4$
$t = 6.86$, $\nu = 10$
Critical value $t_{10;\,0.025} = ±2.23$ [Table 2].
Reject the null hypothesis.
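The slope and its t statistic can be recomputed from the raw sums with the Python sketch below. The function and argument names are illustrative. The residual sum of squares is obtained from the least-squares identity $\sum\{y_i - \bar{y} - b(x_i - \bar{x})\}^2 = C_{yy} - b\,C_{xy}$, where the C terms are corrected sums of squares and products.

```python
from math import sqrt

def slope_t_from_sums(n, sx, sy, sxx, syy, sxy):
    """Slope b and its t statistic from raw sums (sxx = sum of x_i^2, etc.)."""
    cxx = sxx - sx * sx / n               # corrected sum of squares of x
    cyy = syy - sy * sy / n               # corrected sum of squares of y
    cxy = sxy - sx * sy / n               # corrected sum of products
    b = cxy / cxx
    resid_var = (cyy - b * cxy) / (n - 2)  # variance of y about the line
    t = b * sqrt(cxx) / sqrt(resid_var)    # same as b * s_x * sqrt(n-1) / s_y.x
    return b, t

b, t = slope_t_from_sums(12, 766, 1700, 49068, 246100, 109380)
reject_h0 = abs(t) > 2.23   # t with 10 df, two-tailed 5 per cent, from Table 2
```

Working from unrounded intermediates gives a t value within rounding of the tabulated 6.86.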

Page 48 :

Test 12 t-test of a correlation coefficient

Object
To investigate whether the difference between the sample correlation coefficient and zero is statistically significant.

Limitations
It is assumed that the x and y values originate from a bivariate normal distribution, and that the relationship is linear. To test an assumed value of the population coefficient other than zero, refer to the Z-test for a correlation coefficient (Test 13).

Method
Given a sample of n points $(x_i, y_i)$ the correlation coefficient r is calculated from the formula

$$r = \frac{\sum (x_i - \bar{x})(y_i - \bar{y})}{\left[\sum (x_i - \bar{x})^2 \sum (y_i - \bar{y})^2\right]^{1/2}}.$$

To test the null hypothesis that the population value of the correlation coefficient is zero, the test statistic

$$t = \frac{r\sqrt{n - 2}}{\sqrt{1 - r^2}}$$

is calculated and this follows Student's t-distribution with n − 2 degrees of freedom. The test will normally be two-tailed but in certain cases could be one-tailed.

Example
In a study of the possible relationship between advertising on television and product preferences a panel of television viewers is selected. For two brands of toothpaste, one supermarket own brand and one popular brand, panel members were asked to score (on a scale from 1 to 20) their preference for each product. The correlation coefficient between brands was 0.32, which is modest, but is it significantly greater than zero? The calculated t value is 1.35. Our acceptance region is t < 1.75 so we accept the null hypothesis. So there is no significant association between the brands compared, which would suggest a clear preference for popular brand or own brand. Consumers are less likely to substitute own brand for popular brand when preferences appear not to be associated.

Numerical calculation
$n = 18$, $r = 0.32$, $\nu = n - 2$

$$t = \frac{r\sqrt{n - 2}}{\sqrt{1 - r^2}} = \frac{0.32\sqrt{16}}{\sqrt{1 - (0.32)^2}} = 1.35$$

Critical value $t_{16;\,0.05} = 1.75$ [Table 2].
Do not reject the null hypothesis. NB: In this case the x and y variables may be treated as independent.
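A short Python sketch of the numerical calculation. The function name is illustrative; the critical value 1.75 is the one quoted from Table 2.

```python
from math import sqrt

def t_correlation(r, n):
    """t statistic for testing a zero population correlation."""
    return r * sqrt(n - 2) / sqrt(1 - r * r)

t = t_correlation(r=0.32, n=18)
df = 18 - 2
reject_h0 = t > 1.75   # one-tailed 5 per cent value for 16 df, from Table 2
```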

Page 49 :

Test 13 Z-test of a correlation coefficient

Object
To investigate the significance of the difference between a correlation coefficient and a specified value $\rho_0$.

Limitations
1. The x and y values originate from normal distributions.
2. The variance in the y values is independent of the x values.
3. The relationship is linear.
When these conditions cannot be met, the user should turn to the Kendall rank correlation test (Test 59).

Method
With r as defined in the t-test of a correlation coefficient (Test 12), using the Fisher Z-transformation we have

$$Z_1 = \frac{1}{2}\log_e\left(\frac{1 + r}{1 - r}\right) = 1.1513\,\log_{10}\left(\frac{1 + r}{1 - r}\right).$$

The distribution of $Z_1$ is approximately normal with mean $\mu_{Z_1}$ and standard deviation $\sigma_{Z_1}$ where

$$\mu_{Z_1} = \frac{1}{2}\log_e\left(\frac{1 + \rho_0}{1 - \rho_0}\right) = 1.1513\,\log_{10}\left(\frac{1 + \rho_0}{1 - \rho_0}\right), \qquad \sigma_{Z_1} = \frac{1}{\sqrt{n - 3}}.$$

The test statistic is now

$$Z = \frac{Z_1 - \mu_{Z_1}}{\sigma_{Z_1}}.$$

Example
A market research company has assumed from previous research that the correlation between two brands in terms of consumer preference is 0.50. This value has a bearing on stocking levels in supermarkets since one brand will often substitute for another when the number on the shelves of one product runs out. A panel of 24 consumers produces a correlation on preference scores (based on a scale of 1 to 20) for the two brands of 0.75. Can we say that the correlation coefficient is at least 0.50? The Fisher Z-transformation value, calculated as 0.973, yields a test statistic of 1.94. The acceptance region is Z < 1.64. Since the calculated value is greater than the critical value we reject the null hypothesis. This means that the correlation coefficient is at least 0.50 and there is no need to re-evaluate supermarket stocking policy for these two products.

Page 50 :

Numerical calculation
$r = 0.75$, $\rho_0 = 0.50$, $n = 24$

$$\mu_{Z_1} = 1.1513\,\log_{10} 3 = 0.5493$$

$$Z_1 = 1.1513\,\log_{10}\left(\frac{1 + 0.75}{1 - 0.75}\right) = 0.9730 \text{ [Table 4]}$$

$$\sigma_{Z_1} = 0.2182, \qquad Z = \frac{Z_1 - \mu_{Z_1}}{\sigma_{Z_1}} = 1.94.$$

The critical value at α = 0.10 is 1.64 [Table 1].
The calculated value is greater than the critical value.
Reject the null hypothesis of no difference.
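The Fisher transformation is the inverse hyperbolic tangent, so the calculation can be sketched with `math.atanh` instead of Table 4. The function name is illustrative.

```python
from math import atanh, sqrt

def fisher_z_test(r, rho0, n):
    """Z statistic for a sample correlation r against a specified rho0."""
    z1 = atanh(r)           # equals 0.5 * ln((1 + r) / (1 - r))
    mu = atanh(rho0)        # same transformation applied to rho0
    sigma = 1 / sqrt(n - 3)
    return (z1 - mu) / sigma

z = fisher_z_test(r=0.75, rho0=0.50, n=24)
reject_h0 = z > 1.64   # critical value quoted from Table 1
```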

Page 51 :

Test 14 Z-test for two correlation coefficients

Object
To investigate the significance of the difference between the correlation coefficients for a pair of variables occurring from two different samples and the difference between two specified values $\rho_1$ and $\rho_2$.

Limitations
1. The x and y values originate from normal distributions.
2. The variance in the y values is independent of the x values.
3. The relationships are linear.

Method
Using the notation of the Z-test of a correlation coefficient, we form for the first sample

$$Z_1 = \frac{1}{2}\log_e\left(\frac{1 + r_1}{1 - r_1}\right) = 1.1513\,\log_{10}\left(\frac{1 + r_1}{1 - r_1}\right)$$

which has mean $\mu_{Z_1} = \frac{1}{2}\log_e[(1 + \rho_1)/(1 - \rho_1)]$ and standard deviation $\sigma_{Z_1} = 1/\sqrt{n_1 - 3}$, where $n_1$ is the size of the first sample; $Z_2$ is determined in a similar manner. The test statistic is now

$$Z = \frac{(Z_1 - Z_2) - (\mu_{Z_1} - \mu_{Z_2})}{\sigma}$$

where $\sigma = (\sigma_{Z_1}^2 + \sigma_{Z_2}^2)^{1/2}$. Z is normally distributed with mean 0 and with variance 1.

Example
A market research company is keen to categorize a variety of brands of potato crisp based on the correlation coefficients of consumer preferences. The market research company has found that if consumers' preferences for brands are similar then marketing programmes can be merged. Two brands of potato crisp are compared for two advertising regions. Panels are selected of sizes 28 and 35 for the two regions and correlation coefficients for brand preferences are 0.50 and 0.30 respectively. Are the two associations statistically different or can marketing programmes be merged? The calculated Z value is 0.8985 and the acceptance region for the null hypothesis is −1.96 < Z < 1.96. So we accept the null hypothesis and conclude that we can go ahead and merge the marketing programmes.
This, of course, assumes that the correlation coefficient is a good measure to use for grouping market research programmes.

Numerical calculation
$n_1 = 28$, $n_2 = 35$, $r_1 = 0.50$, $r_2 = 0.30$, $\alpha = 0.05$

$$Z_1 = 1.1513\,\log_{10}\left(\frac{1 + r_1}{1 - r_1}\right) = 0.5493 \text{ [Table 4]}$$

Page 52 :

$$Z_2 = 1.1513\,\log_{10}\left(\frac{1 + r_2}{1 - r_2}\right) = 0.3095 \text{ [Table 4]}$$

$$\sigma = \left(\frac{1}{n_1 - 3} + \frac{1}{n_2 - 3}\right)^{1/2} = 0.2669$$

$$Z = \frac{0.5493 - 0.3095}{0.2669} = 0.8985$$

The critical value at α = 0.05 is 1.96 [Table 1].
Do not reject the null hypothesis.
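The two-sample calculation in Python, as a minimal sketch. The function name and the `rho1`/`rho2` defaults (zero hypothesised difference) are illustrative assumptions; `math.atanh` stands in for Table 4.

```python
from math import atanh, sqrt

def z_two_correlations(r1, n1, r2, n2, rho1=0.0, rho2=0.0):
    """Z statistic for the difference between two sample correlations.

    rho1 and rho2 are the hypothesised population values; with equal values
    the mean term (mu_Z1 - mu_Z2) is zero.
    """
    sigma = sqrt(1 / (n1 - 3) + 1 / (n2 - 3))
    return ((atanh(r1) - atanh(r2)) - (atanh(rho1) - atanh(rho2))) / sigma

z = z_two_correlations(r1=0.50, n1=28, r2=0.30, n2=35)
within_acceptance_region = -1.96 < z < 1.96
```

Carried at full precision the statistic agrees with the tabulated 0.8985 to three decimal places.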

Page 53 :

Test 15 χ²-test for a population variance

Object
To investigate the difference between a sample variance $s^2$ and an assumed population variance $\sigma_0^2$.

Limitations
It is assumed that the population from which the sample is drawn follows a normal distribution.

Method
Given a sample of n values $x_1, x_2, \ldots, x_n$, the values of

$$\bar{x} = \frac{\sum x_i}{n} \quad\text{and}\quad s^2 = \frac{\sum (x_i - \bar{x})^2}{n - 1}$$

are calculated. Under the null hypothesis that the population variance is equal to $\sigma_0^2$, the test statistic $(n - 1)s^2/\sigma_0^2$ follows a χ²-distribution with n − 1 degrees of freedom. The test may be either one-tailed or two-tailed.

Example
A manufacturing process produces a fixed fluid injection into micro-hydraulic systems. The variability of the volume of injected fluid is critical and is set at 9 sq ml. A sample of 25 hydraulic systems yields a sample variance of 12 sq ml. Has the variability of the volume of fluid injected changed? The calculated chi-squared value is 32.0 and the 5 per cent critical value is 36.42. So we do not reject the null hypothesis of no difference. This means that we can still consider the variability to be set as required.

Numerical calculation
$\bar{x} = 70$, $\sigma_0^2 = 9$, $n = 25$, $s^2 = 12$, $\nu = 24$

$$\chi^2 = (n - 1)s^2/\sigma_0^2 = \frac{24 \times 12}{9} = 32.0$$

Critical value $\chi^2_{24;\,0.05} = 36.42$ [Table 5].
Do not reject the null hypothesis. The difference between the variances is not significant.
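A small Python sketch of the calculation above. The function name is illustrative, and the critical value is the one quoted from Table 5 rather than computed.

```python
def chi2_variance(s2, sigma0_sq, n):
    """Chi-squared statistic for a sample variance against an assumed one."""
    return (n - 1) * s2 / sigma0_sq

chi2 = chi2_variance(s2=12, sigma0_sq=9, n=25)
df = 25 - 1

CHI2_CRIT_24DF = 36.42          # upper 5 per cent point, from Table 5
reject_h0 = chi2 > CHI2_CRIT_24DF
```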

Page 54 :

Test 16 F-test for two population variances (variance ratio test)

Object
To investigate the significance of the difference between two population variances.

Limitations
The two populations should both follow normal distributions. (It is not necessary that they should have the same means.)

Method
Given samples of size $n_1$ with values $x_1, x_2, \ldots, x_{n_1}$ and size $n_2$ with values $y_1, y_2, \ldots, y_{n_2}$ from the two populations, the values of

\[ \bar{x} = \frac{\sum x_i}{n_1}, \qquad \bar{y} = \frac{\sum y_i}{n_2} \]

and

\[ s_1^2 = \frac{\sum (x_i - \bar{x})^2}{n_1 - 1}, \qquad s_2^2 = \frac{\sum (y_i - \bar{y})^2}{n_2 - 1} \]

can be calculated. Under the null hypothesis that the variances of the two populations are equal, the test statistic $F = s_1^2/s_2^2$ follows the F-distribution with $(n_1 - 1, n_2 - 1)$ degrees of freedom. The test may be either one-tailed or two-tailed.

Example
Two production lines for the manufacture of springs are compared. It is important that the variances of the compression resistance (in standard units) for the two production lines are the same. Two samples are taken, one from each production line, and the variances are calculated. What can be said about the two population variances from which the two samples have been taken? Is it likely that they differ? The variance ratio statistic F is calculated as the ratio of the two variances and yields a value of 0.36/0.087 = 4.14. The 5 per cent critical value for F is 5.41. We do not reject our null hypothesis of no difference between the two population variances. There is no significant difference between population variances.

Numerical calculation
$\sum x = 0.4$, $\sum x^2 = 0.30$, $s_1^2 = 0.087$, $n_1 = 4$
$\sum y = 0.06$, $\sum y^2 = 1.78$, $s_2^2 = 0.36$, $n_2 = 6$

\[ F_{3,\,5} = \frac{0.36}{0.087} = 4.14 \]

Critical value $F_{3,\,5;\,0.05} = 5.41$ [Table 3].
Do not reject the null hypothesis. The two population variances are not significantly different from each other.
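The sums listed in the numerical calculation are enough to recover both sample variances, using the identity $\sum (x_i - \bar{x})^2 = \sum x_i^2 - (\sum x_i)^2/n$. The sketch below assumes that identity and uses an invented helper name, `variance_from_sums`; it is meant only to show where the figures 0.087 and 0.36 come from.

```python
def variance_from_sums(sum_x, sum_x2, n):
    """Sample variance from the sum and sum of squares of n observations."""
    return (sum_x2 - sum_x ** 2 / n) / (n - 1)


# Sums from the spring production example
s1_sq = variance_from_sums(sum_x=0.4, sum_x2=0.30, n=4)
s2_sq = variance_from_sums(sum_x=0.06, sum_x2=1.78, n=6)

# Variance ratio as formed in the example (second variance over first)
f_ratio = s2_sq / s1_sq

print(f"s1^2 = {s1_sq:.3f}, s2^2 = {s2_sq:.2f}, F = {f_ratio:.2f}")
```

Carrying the variances unrounded gives a ratio of about 4.11; the 4.14 in the text results from dividing the rounded values 0.36 and 0.087. Either figure is below the quoted critical value.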

Page 55 :

Test 17 F-test for two population variances (with correlated observations)

Object
To investigate the difference between two population variances when there is correlation between the pairs of observations.

Limitations
It is assumed that the observations have been performed in pairs and that correlation exists between the paired observations. The populations are normally distributed.

Method
A random sample of size n yields the following pairs of observations: $(x_1, y_1), (x_2, y_2), \ldots, (x_n, y_n)$. The variance ratio F is calculated as in Test 16. Also the sample correlation r is found from

\[ r = \frac{\sum (x_i - \bar{x})(y_i - \bar{y})}{\left[\sum (x_i - \bar{x})^2 \sum (y_i - \bar{y})^2\right]^{1/2}}. \]

The quotient

\[ \gamma_F = \frac{F - 1}{\left[(F + 1)^2 - 4r^2 F\right]^{1/2}} \]

provides a test statistic with degrees of freedom $\nu = n - 2$. The critical values for this test can be found in Table 6. Here the null hypothesis is $\sigma_1^2 = \sigma_2^2$, when the population correlation is not zero. Here F is greater than 1.

Example
A researcher tests a sample panel of television viewers on their support for a particular issue prior to a focus group, during which the issue is discussed in some detail. The panel members are then asked the same questions after the discussion. The pre-discussion view is x and the post-discussion view is y. The question, here, is ‘has the focus group altered the variability of responses?’

We find the test statistic, $\gamma_F$, is 0.796. Table 6 gives us a 5 per cent critical value of 0.811. For this test, since the calculated value is less than the critical value, we do not reject the null hypothesis of no difference between variances. Hence the focus group has not altered the variability of responses.

Page 56 :

Numerical calculation
$n_1 = n_2 = 6$, $\sum x = 0.4$, $\sum x^2 = 0.30$, $s_1^2 = 0.087$
$\sum y = 0.06$, $\sum y^2 = 1.78$, $s_2^2 = 0.36$, $F = s_2^2/s_1^2 = 4.14$, $r = 0.811$

\[ \gamma_F = \frac{F - 1}{\left[(F + 1)^2 - 4r^2 F\right]^{1/2}} = \frac{4.14 - 1}{\left[(5.14)^2 - 4r^2 \times 4.14\right]^{1/2}} = \frac{3.14}{\left[26.42 - 16.56 \times 0.658\right]^{1/2}} = 0.796 \]

$\alpha = 0.05$, $\nu = n - 2 = 4$, critical value $r = 0.811$ [Table 6].
Hence do not reject the hypothesis of no difference between variances.
The null hypothesis $\sigma_1^2 = \sigma_2^2$ has to be rejected when the value of the test statistic equals or exceeds the critical value.
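A minimal sketch of the Test 17 arithmetic follows. The function names `pearson_r` and `gamma_f` are illustrative only; the critical value 0.811 is the Table 6 figure quoted above rather than something computed here.

```python
import math


def pearson_r(xs, ys):
    """Sample correlation coefficient of paired observations."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    return sxy / math.sqrt(sxx * syy)


def gamma_f(f, r):
    """Test statistic (F - 1) / sqrt((F + 1)^2 - 4 r^2 F), with F > 1."""
    return (f - 1) / math.sqrt((f + 1) ** 2 - 4 * r ** 2 * f)


# Figures from the focus group example
g = gamma_f(f=4.14, r=0.811)
CRITICAL_5_PER_CENT = 0.811  # Table 6 value quoted in the text (4 d.f.)
print(f"gamma_F = {g:.3f}")
print("reject H0" if g >= CRITICAL_5_PER_CENT else "do not reject H0")
```

The computed value is about 0.797, in line with the 0.796 obtained in the text from rounded intermediate figures.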

Page 57 :

Test 18 Hotelling’s T²-test for two series of population means

Object
To compare the results of two experiments, each of which yields a multivariate result. In other words, we wish to know if the mean pattern obtained from the first experiment agrees with the mean pattern obtained for the second.

Limitations
All the variables can be assumed to be independent of each other and all variables follow a multivariate normal distribution. (The variables are usually correlated.)

Method
Denote the results of the two experiments by subscripts A and B. For ease of description we shall limit the number of variables to three and we shall call these x, y and z. The number of observations is denoted by $n_A$ and $n_B$ for the two experiments. It is necessary to solve the following three equations to find the statistics a, b and c:

\[
\begin{aligned}
a[(xx)_A + (xx)_B] + b[(xy)_A + (xy)_B] + c[(xz)_A + (xz)_B] &= (n_A + n_B - 2)(\bar{x}_A - \bar{x}_B) \\
a[(xy)_A + (xy)_B] + b[(yy)_A + (yy)_B] + c[(yz)_A + (yz)_B] &= (n_A + n_B - 2)(\bar{y}_A - \bar{y}_B) \\
a[(xz)_A + (xz)_B] + b[(yz)_A + (yz)_B] + c[(zz)_A + (zz)_B] &= (n_A + n_B - 2)(\bar{z}_A - \bar{z}_B)
\end{aligned}
\]

where $(xx)_A = \sum (x_A - \bar{x}_A)^2$, $(xy)_A = \sum (x_A - \bar{x}_A)(y_A - \bar{y}_A)$, and similar definitions exist for other terms.

Hotelling’s T² is defined as

\[ T^2 = \frac{n_A n_B}{n_A + n_B} \cdot \{a(\bar{x}_A - \bar{x}_B) + b(\bar{y}_A - \bar{y}_B) + c(\bar{z}_A - \bar{z}_B)\} \]

and the test statistic is

\[ F = \frac{n_A + n_B - p - 1}{p(n_A + n_B - 2)}\, T^2 \]

which follows an F-distribution with $(p, n_A + n_B - p - 1)$ degrees of freedom. Here p is the number of variables.

Example
Two batteries of visual stimulus are applied in two experiments on young male and female volunteer students. A researcher wishes to know if the multivariate pattern of

Page 58 :

responses is the same for males and females. The appropriate F statistic is computed as 3.60 and compared with the tabulated value of 4.76 [Table 3]. Since the computed F value is less than the critical F value, the null hypothesis of no difference between the two multivariate patterns of response is not rejected. So the males and females do not differ significantly in their responses to the stimuli.

Numerical calculation
$n_A = 6$, $n_B = 4$, $\nu_1 = p = 3$, $\nu_2 = n_A + n_B - p - 1 = 6 + 4 - 4 = 6$, $\alpha = 0.05$
$(xx) = (xx)_A + (xx)_B = 19$, $(yy) = 30$, $(zz) = 18$, $(xy) = -6$, $(xz) = 1$, $(yz) = -7$
$\bar{x}_A = 7$, $\bar{x}_B = 4.5$, $\bar{y}_A = 8$, $\bar{y}_B = 6$, $\bar{z}_A = 6$, $\bar{z}_B = 5$

The equations

\[
\begin{aligned}
19a - 6b + c &= 20 \\
-6a + 30b - 7c &= 16 \\
a - 7b + 18c &= 8
\end{aligned}
\]

are satisfied by $a = 1.320$, $b = 0.972$, $c = 0.749$. Thus

\[ T^2 = \frac{6 \times 4}{10} \cdot (1.320 \times 2.5 + 0.972 \times 2 + 0.749 \times 1) = 14.38 \]

\[ F = \frac{6}{3 \times 8} \times 14.38 = 3.60 \]

Critical value $F_{3,\,6;\,0.05} = 4.76$ [Table 3].
Do not reject the null hypothesis.
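The three simultaneous equations and the two statistics can be reproduced with a short script. This is a sketch under stated assumptions: `solve_linear` is a plain Gauss-Jordan routine written for the illustration (any linear solver would do), `hotelling_two_sample` is an invented name, and the input matrix holds the pooled sums of squares and products $(xx)$, $(xy)$, and so on, from the numerical calculation.

```python
def solve_linear(matrix, rhs):
    """Solve matrix * x = rhs by Gauss-Jordan elimination with pivoting."""
    n = len(rhs)
    m = [row[:] + [value] for row, value in zip(matrix, rhs)]
    for col in range(n):
        # Bring the largest remaining pivot into position
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                factor = m[r][col] / m[col][col]
                m[r] = [x - factor * y for x, y in zip(m[r], m[col])]
    return [m[i][n] / m[i][i] for i in range(n)]


def hotelling_two_sample(pooled, mean_a, mean_b, n_a, n_b):
    """Return the coefficients (a, b, c, ...), T^2 and the F statistic."""
    p = len(mean_a)
    diff = [ma - mb for ma, mb in zip(mean_a, mean_b)]
    coeffs = solve_linear(pooled, [(n_a + n_b - 2) * d for d in diff])
    t2 = n_a * n_b / (n_a + n_b) * sum(c * d for c, d in zip(coeffs, diff))
    f = (n_a + n_b - p - 1) / (p * (n_a + n_b - 2)) * t2
    return coeffs, t2, f


# Pooled sums of squares and products from the numerical calculation
pooled = [[19, -6, 1],
          [-6, 30, -7],
          [1, -7, 18]]
coeffs, t2, f = hotelling_two_sample(pooled, [7, 8, 6], [4.5, 6, 5], 6, 4)
print([round(c, 3) for c in coeffs], round(t2, 2), round(f, 2))
```

The coefficients agree with $a = 1.320$, $b = 0.972$, $c = 0.749$ to three decimals. Using them unrounded gives $T^2$ of about 14.39 rather than 14.38, and F still rounds to 3.60.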

Page 59 :

Test 19 Discriminant test for the origin of a p-fold sample

Object
To investigate the origin of one series of values for p random variates, when one of two markedly different populations may have produced that particular series.

Limitations
This test provides a decision rule which is closely related to Hotelling’s T²-test (Test 18), hence is subject to the same limitations.

Method
Using the notation of Hotelling’s T²-test, we may take samples from the two populations and obtain two quantities

\[ D_A = a\bar{x}_A + b\bar{y}_A + c\bar{z}_A \]
\[ D_B = a\bar{x}_B + b\bar{y}_B + c\bar{z}_B \]

for the two populations. From the series for which the origin has to be traced we can obtain a third quantity

\[ D_S = a\bar{x}_S + b\bar{y}_S + c\bar{z}_S. \]

If $|D_A - D_S| < |D_B - D_S|$ we say that the series belongs to population A, but if $|D_A - D_S| > |D_B - D_S|$ we conclude that population B produced the series under consideration.

Example
A discriminant function is produced for a collection of pre-historic dog bones. A new relic is found and the appropriate measurements are taken. There are two ancient populations of dog, A and B, to which the new bones could belong. To which population do the new bones belong? This procedure is normally performed by statistical computer software. The $D_A$ and $D_B$ values as well as the $D_S$ value are computed. The $D_S$ value is closer to $D_A$ and so the new dog bone relic belongs to population A.

Numerical calculation
$a = 1.320$, $b = 0.972$, $c = 0.749$
$\bar{x}_A = 7$, $\bar{y}_A = 8$, $\bar{z}_A = 6$, $\bar{x}_B = 4.5$, $\bar{y}_B = 6$, $\bar{z}_B = 5$

\[ D_A = 1.320 \times 7 + 0.972 \times 8 + 0.749 \times 6 = 21.510 \]
\[ D_B = 1.320 \times 4.5 + 0.972 \times 6 + 0.749 \times 5 = 15.517 \]

If $\bar{x}_S = 6$, $\bar{y}_S = 6$ and $\bar{z}_S = 7$, then

\[ D_S = 1.320 \times 6 + 0.972 \times 6 + 0.749 \times 7 = 18.995 \]
\[ D_A - D_S = 21.510 - 18.995 = 2.515 \]
\[ D_B - D_S = 15.517 - 18.995 = -3.478 \]

Since $|2.515| < |-3.478|$, $D_S$ lies closer to $D_A$. $D_S$ belongs to population A.
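The decision rule amounts to comparing two distances on the discriminant scale. A small sketch, with hypothetical helper names `discriminant_score` and `classify`, and with ties arbitrarily assigned to population B (an assumption made here, since the text does not cover that case):

```python
def discriminant_score(coeffs, means):
    """Linear discriminant score D = a*x + b*y + c*z (any number of terms)."""
    return sum(c * m for c, m in zip(coeffs, means))


def classify(coeffs, mean_a, mean_b, mean_s):
    """Assign the series S to whichever population score lies nearer."""
    d_a = discriminant_score(coeffs, mean_a)
    d_b = discriminant_score(coeffs, mean_b)
    d_s = discriminant_score(coeffs, mean_s)
    # Ties go to "B" here purely by convention of this sketch
    return "A" if abs(d_a - d_s) < abs(d_b - d_s) else "B"


# Figures from the dog bone example
coeffs = [1.320, 0.972, 0.749]
mean_a, mean_b, mean_s = [7, 8, 6], [4.5, 6, 5], [6, 6, 7]

for label, means in (("D_A", mean_a), ("D_B", mean_b), ("D_S", mean_s)):
    print(label, round(discriminant_score(coeffs, means), 3))
print("population", classify(coeffs, mean_a, mean_b, mean_s))
```

The coefficients are those obtained in Test 18; with a different number of variates the same functions apply unchanged, provided the coefficient and mean lists have equal length.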

Page 60 :

Test 20 Fisher’s cumulant test for normality of a population

Object
To investigate the significance of the difference between a frequency distribution based on a given sample and a normal frequency distribution with the same mean and the same variance.

Limitations
The sample size should be large, say n > 50. If the two distributions do not have the same mean and the same variance then the w/s-test (Test 33) can be used.

Method
Sample moments can be calculated by

\[ M_r = \sum_{i=1}^{n} x_i^r \quad \text{or} \quad M_r = \sum_{i=1}^{n} x_i^r f_i \]

where the $x_i$ are the interval midpoints in the case of grouped data and $f_i$ is the frequency. The first four sample cumulants (Fisher’s K-statistics) are

\[ K_1 = \frac{M_1}{n} \]

\[ K_2 = \frac{nM_2 - M_1^2}{n(n - 1)} \]

\[ K_3 = \frac{n^2 M_3 - 3nM_2 M_1 + 2M_1^3}{n(n - 1)(n - 2)} \]

\[ K_4 = \frac{(n^3 + n^2)M_4 - 4(n^2 + n)M_3 M_1 - 3(n^2 - n)M_2^2 + 12nM_2 M_1^2 - 6M_1^4}{n(n - 1)(n - 2)(n - 3)} \]

To test for skewness the test statistic is

\[ u_1 = \frac{K_3}{(K_2)^{3/2}} \times \left(\frac{n}{6}\right)^{1/2} \]

which should follow a standard normal distribution.

To test for kurtosis the test statistic is

\[ u_2 = \frac{K_4}{(K_2)^2} \times \left(\frac{n}{24}\right)^{1/2} \]

which should follow a standard normal distribution.

Page 61 :

A combined test can be obtained using the test statistic

\[ \chi^2 = \left[\frac{K_3}{(K_2)^{3/2}} \times \left(\frac{n}{6}\right)^{1/2}\right]^2 + \left[\frac{K_4}{(K_2)^2} \times \left(\frac{n}{24}\right)^{1/2}\right]^2 \]

which will approximately follow a χ²-distribution with two degrees of freedom.

Example
Example A
A large sample of 190 component measurements yields the following calculations (see table). Do the sample data follow a normal distribution? The test for skewness gives a $u_1$ statistic of 0.473 and the critical value of the normal test statistic is 1.96. Since $u_1$ is less than this critical value we do not reject the null hypothesis of no difference. So for skewness the data are similar to a normal distribution. For kurtosis we have a $u_2$ statistic of 0.474 and, again, a critical value of 1.96. So, again, we do not reject the null hypothesis; kurtosis is not significantly different from that of a normal distribution with the same mean and variance. The combined test gives a calculated chi-squared value of 0.449, which is smaller than the 5 per cent critical value of 5.99. So we conclude that the data are consistent with a normal distribution.

Example B
We calculate the values of skewness and kurtosis together with their respective standard deviations and produce:
$u_1$ = skewness/s.d. = 0.477
$u_2$ = kurtosis/s.d. = 0.480
Table 7 gives (for sample sizes 200 and 175) critical values for the skewness of 0.282 to 0.301 and for the kurtosis of 0.62 to 0.66. The sample skewness (0.084) and kurtosis (0.169) lie below these values. So, again, we do not reject the null hypothesis.

Numerical calculation
Example A
$\sum f = n = 190$, $\sum fx = 151$, $\sum fx^2 = 805$, $\sum fx^3 = 1837$, $\sum fx^4 = 10\,753$
i.e. $M_1 = 151$, $M_2 = 805$, $M_3 = 1837$, $M_4 = 10\,753$

\[ K_2 = \frac{(190 \times 805) - (151)^2}{190 \times 189} = 3.624310 \]

\[ K_3 = \frac{(190)^2 \times 1837 - 3 \times 190 \times 805 \times 151 + 2(151)^3}{190 \times 189 \times 188} = 0.5799445 \]

\[ K_4 = \frac{2\,795\,421\,924}{190 \times 189 \times 188 \times 187} = 2.214280 \]

Page 62 :

Test for skewness

\[ u_1 = \frac{0.579945}{3.62431\sqrt{3.624310}} \times 5.6273 = 0.08405 \times 5.6273 = 0.473 \]

The critical value at $\alpha = 0.05$ is 1.96.
Do not reject the null hypothesis [Table 1].

Test for kurtosis

\[ u_2 = \frac{2.214279}{(3.62431)^2} \times \left(\frac{190}{24}\right)^{1/2} = 0.1686 \times 2.813657 = 0.474 \]

The critical value at $\alpha = 0.05$ is 1.96.
Do not reject the null hypothesis [Table 1].

Combined test

\[ \chi^2 = (0.473)^2 + (0.474)^2 = 0.2237 + 0.2250 = 0.449 \]

which is smaller than the critical value 5.99 [Table 5].

Example B

\[ \text{Let skewness} = g_1 = \frac{K_3}{K_2\sqrt{K_2}} = \frac{0.579945}{3.624310\sqrt{3.624310}} = 0.084052 \]

\[ \text{kurtosis} = g_2 = \frac{K_4}{K_2^2} = \frac{2.214279}{(3.624310)^2} = 0.168570 \]

\[ \text{standard deviation } \sigma(g_1) = \sqrt{\frac{6n(n - 1)}{(n - 2)(n + 1)(n + 3)}} = \sqrt{\frac{6 \times 190 \times 189}{188 \times 191 \times 193}} = \sqrt{0.0310898} = 0.176323 \]

\[ \text{standard deviation } \sigma(g_2) = \sqrt{\frac{24n(n - 1)^2}{(n - 3)(n - 2)(n + 3)(n + 5)}} = \sqrt{\frac{24 \times 190 \times 189^2}{187 \times 188 \times 193 \times 195}} = 0.350872 \]

\[ \text{Here } u_1 = \frac{0.084052}{0.176323} = 0.477, \qquad u_2 = \frac{0.168570}{0.350872} = 0.480. \]

Critical values for $g_1$ lie between 0.282 (for 200) and 0.301 (for 175) [Table 7].
The right-side critical value for $g_2$ lies between 0.62 and 0.66 [Table 7].
Hence the null hypothesis should not be rejected.
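The K-statistics and the three test statistics of Example A can be checked with the sketch below. The function names `k_statistics` and `cumulant_test` are invented for the illustration. The $K_4$ expression is written with the term $12nM_2M_1^2$, the form of the fourth k-statistic that reproduces the numerator 2 795 421 924 quoted in Example A.

```python
import math


def k_statistics(n, m1, m2, m3, m4):
    """Fisher's K2, K3 and K4 from the power sums M1..M4 of n observations."""
    k2 = (n * m2 - m1 ** 2) / (n * (n - 1))
    k3 = (n ** 2 * m3 - 3 * n * m2 * m1 + 2 * m1 ** 3) / (
        n * (n - 1) * (n - 2))
    k4 = ((n ** 3 + n ** 2) * m4
          - 4 * (n ** 2 + n) * m3 * m1
          - 3 * (n ** 2 - n) * m2 ** 2
          + 12 * n * m2 * m1 ** 2
          - 6 * m1 ** 4) / (n * (n - 1) * (n - 2) * (n - 3))
    return k2, k3, k4


def cumulant_test(n, m1, m2, m3, m4):
    """Return (u1, u2, chi2): skewness, kurtosis and combined statistics."""
    k2, k3, k4 = k_statistics(n, m1, m2, m3, m4)
    u1 = k3 / k2 ** 1.5 * math.sqrt(n / 6)
    u2 = k4 / k2 ** 2 * math.sqrt(n / 24)
    return u1, u2, u1 ** 2 + u2 ** 2


# Power sums from Example A
k2, k3, k4 = k_statistics(190, 151, 805, 1837, 10753)
u1, u2, chi2 = cumulant_test(190, 151, 805, 1837, 10753)
print(f"K2 = {k2:.6f}, K3 = {k3:.7f}, K4 = {k4:.6f}")
print(f"u1 = {u1:.3f}, u2 = {u2:.3f}, chi-squared = {chi2:.3f}")
```

Both $u_1$ and $u_2$ are compared with 1.96 and the combined statistic with 5.99, as in the text; none of these critical values is computed by the sketch.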

Page 63 :